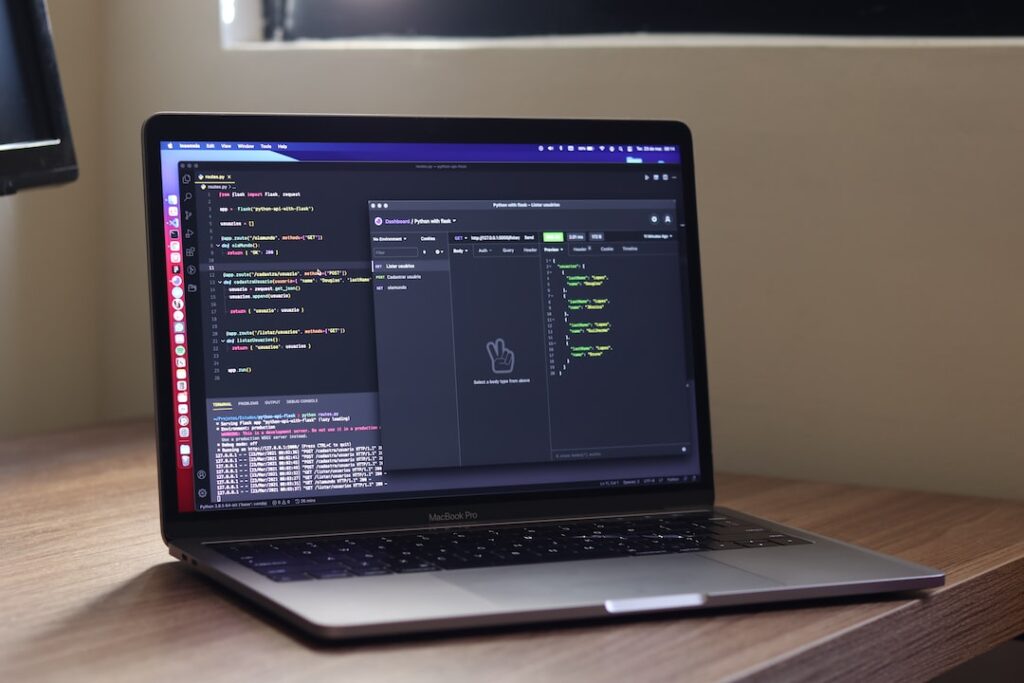

Welcome to the era of data lakehouses powered by Databricks!

Efficient data management is crucial for any organization that relies on analytics. The convergence of data lakes and data warehouses has brought about the concept of a ‘lakehouse,’ which aims to pair the flexible, low-cost storage of a lake with the reliability and query performance of a warehouse. Databricks has emerged as a leader in this space, enabling organizations to streamline their data architecture. This article guides you through optimizing your Databricks lakehouse implementation. From data ingestion to processing and analytics, we will explore best practices, tips, and strategies to get the most out of your lakehouse. Let’s dive in.

Understanding Databricks Lakehouse Implementation

Key Components

Databricks Lakehouse Implementation has emerged as a popular choice for organizations looking to streamline their data architecture. Understanding the key components of this implementation is crucial for leveraging its full potential. Let’s delve into the core components that make up a Databricks Lakehouse.

- Unified Data Analytics

One of the primary components of Databricks Lakehouse is its ability to provide a unified platform for data analytics. By combining the features of data lakes and data warehouses, organizations can perform both batch and real-time analytics on the same platform, leading to faster insights and streamlined processes.

- Delta Lake

Delta Lake is another key component of Databricks Lakehouse Implementation. It offers ACID transactions, schema enforcement, and data versioning (often called time travel), which together support data reliability and consistency. With Delta Lake, organizations can manage their data pipelines more efficiently, improve data quality, and strengthen data governance.

- MLflow

For organizations focusing on machine learning and AI capabilities, MLflow plays a crucial role in the Databricks Lakehouse Implementation. MLflow provides end-to-end machine learning lifecycle management, enabling data scientists to build, train, deploy, and monitor machine learning models seamlessly within the Lakehouse architecture.
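To make two of the Delta Lake guarantees described above more concrete, here is a minimal sketch of schema enforcement and data versioning. It is a toy in-memory model written in plain Python, not Delta Lake's API; the `VersionedTable` class and its methods are hypothetical names chosen for illustration.

```python
from typing import Any, Dict, List, Optional


class VersionedTable:
    """Toy in-memory table: rejects rows that break the schema and keeps every version."""

    def __init__(self, schema: Dict[str, type]) -> None:
        self.schema = schema
        # Version 0 is the empty table; each successful append adds a snapshot.
        self._versions: List[List[Dict[str, Any]]] = [[]]

    def append(self, rows: List[Dict[str, Any]]) -> int:
        """Validate every row first, then commit the batch (all-or-nothing)."""
        for row in rows:
            if set(row) != set(self.schema):
                raise ValueError(f"columns {sorted(row)} do not match the schema")
            for column, expected in self.schema.items():
                if not isinstance(row[column], expected):
                    raise TypeError(f"column {column!r} expects {expected.__name__}")
        self._versions.append(self._versions[-1] + [dict(r) for r in rows])
        return len(self._versions) - 1  # the new version number

    def read(self, version: Optional[int] = None) -> List[Dict[str, Any]]:
        """Read the latest snapshot, or an earlier one by version number."""
        index = len(self._versions) - 1 if version is None else version
        return [dict(r) for r in self._versions[index]]


table = VersionedTable({"order_id": int, "amount": float})
v1 = table.append([{"order_id": 1, "amount": 9.5}])
v2 = table.append([{"order_id": 2, "amount": 20.0}])

rejected = False
try:
    # One bad row causes the whole batch to be rejected.
    table.append([{"order_id": 3, "amount": 1.0}, {"order_id": 4, "amount": "oops"}])
except TypeError:
    rejected = True
```

After this runs, the latest read returns the two valid orders, `table.read(1)` still returns the table as it looked after the first write, and the rejected batch leaves no trace. Real Delta tables provide these properties through a transaction log rather than full snapshots.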

Challenges Faced

While Databricks Lakehouse Implementation offers a comprehensive solution for modern data management, organizations may encounter certain challenges during its adoption. It is essential to be aware of these challenges to effectively address them and optimize the implementation process.

- Data Silos Integration

One of the common challenges faced during Databricks Lakehouse Implementation is integrating data silos from various sources. Organizations may struggle with consolidating data from disparate systems into a unified platform, leading to data inconsistency and inefficiencies. Implementing robust data integration strategies is crucial to overcoming this challenge.

- Scalability and Performance

Scalability and performance issues can also arise when implementing Databricks Lakehouse, especially with the increasing volume and velocity of data. Organizations need to design scalable architectures and optimize query performance to ensure smooth operations and timely delivery of insights.

- Data Governance and Security

Maintaining data governance and security standards is a critical challenge in Databricks Lakehouse Implementation. Ensuring data privacy, compliance with regulations, and implementing robust security measures are essential components of a successful Lakehouse architecture.

Understanding the key components and challenges of Databricks Lakehouse Implementation is vital for organizations aiming to harness the power of modern data analytics. By addressing these components and challenges effectively, organizations can build a robust data architecture that drives innovation, efficiency, and data-driven decision-making.

Steps to Streamline Databricks Lakehouse Implementation

Optimizing Data Ingestion

Efficient data ingestion is crucial for a successful Databricks Lakehouse implementation, because every downstream table and report depends on data arriving completely and on time. Extraction techniques such as Change Data Capture (CDC) and real-time ingestion pipelines keep the Lakehouse in sync with source systems without reloading full tables. On the transformation side, plan for schema evolution and data enrichment so that your data is ready for analysis. For loading, strategies such as parallel processing and data partitioning improve the overall efficiency and scalability of your Databricks Lakehouse.
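As a rough illustration of what Change Data Capture involves, the sketch below applies a batch of change events to a table keyed by primary key. It is plain Python under stated assumptions: the event shape (`seq`, `op`, `key`, `data`) and the function name `apply_cdc` are hypothetical, and on Databricks this logic would more typically be expressed as a Delta Lake `MERGE`.

```python
from typing import Any, Dict, Iterable

Row = Dict[str, Any]


def apply_cdc(target: Dict[int, Row], events: Iterable[Dict[str, Any]]) -> Dict[int, Row]:
    """Return a copy of `target` with the change events applied in sequence order."""
    result = {key: dict(row) for key, row in target.items()}
    for event in sorted(events, key=lambda e: e["seq"]):
        key = event["key"]
        if event["op"] == "delete":
            result.pop(key, None)
        elif event["op"] in ("insert", "update"):
            # Merge changed columns over whatever is already stored for this key.
            result[key] = {**result.get(key, {}), **event["data"]}
        else:
            raise ValueError(f"unknown operation: {event['op']!r}")
    return result


customers = {1: {"name": "Ada", "city": "London"}}
events = [
    {"seq": 2, "op": "update", "key": 1, "data": {"city": "Paris"}},
    {"seq": 1, "op": "insert", "key": 2, "data": {"name": "Lin", "city": "Oslo"}},
    {"seq": 3, "op": "delete", "key": 2},
]
synced = apply_cdc(customers, events)
```

Sorting by sequence number matters: events can arrive out of order, and applying the delete before the insert would leave a stale row behind.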

Improving Data Quality and Governance

Maintaining high data quality and robust governance practices is paramount for the integrity and reliability of your data assets. In this section, we will discuss strategies for assessing data quality, conducting comprehensive data profiling, and implementing effective metadata management within your Databricks Lakehouse architecture. Learn how to establish data quality metrics, enforce data validation rules, and create data lineage documentation to ensure data accuracy and consistency. Furthermore, explore the importance of data governance frameworks in defining data ownership, access controls, and regulatory compliance to support data-driven decision-making.
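One way to turn data validation rules into data quality metrics is to compute a pass rate per rule. The following is a minimal sketch in plain Python; the rule names, the sample rows, and the `quality_report` function are assumptions made for illustration, not part of any Databricks API.

```python
from typing import Any, Callable, Dict, List

Row = Dict[str, Any]
Rule = Callable[[Row], bool]


def quality_report(rows: List[Row], rules: Dict[str, Rule]) -> Dict[str, float]:
    """Return the fraction of rows that pass each named validation rule."""
    report = {}
    for name, rule in rules.items():
        passed = sum(1 for row in rows if rule(row))
        # An empty batch trivially passes every rule.
        report[name] = passed / len(rows) if rows else 1.0
    return report


rules: Dict[str, Rule] = {
    "order_id_present": lambda r: r.get("order_id") is not None,
    "amount_non_negative": lambda r: isinstance(r.get("amount"), (int, float))
    and r["amount"] >= 0,
}
rows = [
    {"order_id": 1, "amount": 10.0},
    {"order_id": None, "amount": 5.0},
    {"order_id": 3, "amount": -2.0},
    {"order_id": 4, "amount": 7.5},
]
report = quality_report(rows, rules)
```

Tracking these pass rates per load makes it possible to set thresholds (for example, failing a pipeline run when a rule drops below an agreed level) instead of discovering bad data in a dashboard.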

Enhancing Performance with Databricks

To unlock the full potential of Databricks for your Lakehouse, focus on enhancing performance. Start with query performance: query caching and query tuning reduce execution times and improve overall system responsiveness. Next, use Databricks clusters effectively by configuring auto-scaling and matching resource allocation to workload demands. Data caching mechanisms, such as Delta caching (called disk caching in more recent Databricks documentation), can further accelerate queries that repeatedly read the same data. Together, tuned queries, right-sized clusters, and caching improve the speed, efficiency, and scalability of your data processing tasks within the Databricks environment.
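The auto-scaling and caching settings mentioned above usually end up in a cluster definition. The sketch below builds such a definition as a Python dictionary. The field names (`autoscale`, `min_workers`, `max_workers`, `autotermination_minutes`, `spark_conf`) and the `spark.databricks.io.cache.enabled` setting follow the Databricks Clusters API and cache configuration as I understand them, so check them against the current documentation; the helper name, the default values, and the placeholder strings are assumptions.

```python
import json
from typing import Any, Dict


def autoscaling_cluster_spec(
    name: str, min_workers: int, max_workers: int, enable_cache: bool = True
) -> Dict[str, Any]:
    """Build a cluster definition with auto-scaling and optional data caching."""
    if not 1 <= min_workers <= max_workers:
        raise ValueError("expected 1 <= min_workers <= max_workers")
    spec: Dict[str, Any] = {
        "cluster_name": name,
        "spark_version": "<runtime-version>",  # placeholder: pick a supported runtime
        "node_type_id": "<node-type>",  # placeholder: depends on your cloud provider
        "autoscale": {"min_workers": min_workers, "max_workers": max_workers},
        "autotermination_minutes": 30,  # assumed value: stop idle clusters to save cost
        "spark_conf": {},
    }
    if enable_cache:
        spec["spark_conf"]["spark.databricks.io.cache.enabled"] = "true"
    return spec


spec = autoscaling_cluster_spec("etl-nightly", min_workers=2, max_workers=8)
payload = json.dumps(spec, indent=2)  # JSON body that could be sent to the API
```

Keeping the definition in code rather than in the UI makes the worker bounds reviewable and repeatable across environments.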

Best Practices for Efficient Implementation

Efficient implementation is what lets organizations deliver value from a project quickly and stay competitive. By adhering to a set of best practices, teams can optimize their processes, strengthen security, and maintain consistent performance.

Automation and Orchestration: Streamlining Workflow Processes

A cornerstone of efficient implementation lies in the strategic utilization of automation and orchestration tools. Automation significantly reduces manual intervention, enhances accuracy, and accelerates task completion. Meanwhile, orchestration facilitates seamless coordination among disparate systems and services, culminating in improved operational efficiency and heightened productivity.
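At its core, orchestration means running tasks in an order that respects their dependencies. Here is a minimal sketch using Python's standard-library `graphlib`; the task names and the `run_pipeline` helper are hypothetical, and a production setup would use a dedicated scheduler with retries and alerting rather than this loop.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Callable, Dict, List, Set


def run_pipeline(
    tasks: Dict[str, Callable[[], None]], dependencies: Dict[str, Set[str]]
) -> List[str]:
    """Run each task after all of its upstream tasks; return the execution order."""
    order = list(TopologicalSorter(dependencies).static_order())
    for name in order:
        tasks[name]()
    return order


log: List[str] = []
tasks = {
    "ingest": lambda: log.append("ingest"),
    "transform": lambda: log.append("transform"),
    "validate": lambda: log.append("validate"),
    "publish": lambda: log.append("publish"),
}
# Each key maps a task to the set of tasks that must finish before it starts.
dependencies = {
    "transform": {"ingest"},
    "validate": {"transform"},
    "publish": {"validate"},
}
order = run_pipeline(tasks, dependencies)
```

Declaring dependencies as data, instead of hard-coding the call order, is what allows an orchestrator to detect cycles, skip downstream work after a failure, and run independent branches in parallel.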

Security Measures: Safeguarding Data and Systems

The implementation of stringent security measures is non-negotiable when it comes to protecting sensitive data and systems from potential threats. Robust security protocols encompass routine security evaluations, encryption of critical information, stringent access controls, and compliance with industry standards and regulations. Prioritizing security fortifies organizations against data breaches, upholds customer trust, and safeguards the integrity of their operations.

Monitoring and Maintenance: Ensuring Long-Term Efficiency

Sustained operational efficiency hinges on continuous monitoring and proactive maintenance. Monitoring tools help identify performance bottlenecks, potential failures, and operational glitches in real time, enabling swift remedial action. Routine maintenance, such as software updates, system patches, and hardware upgrades, is indispensable for averting downtime and keeping system performance high.
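A simple form of the bottleneck detection described above is to compare each job run against its own history. The sketch below flags runs that take much longer than the median of earlier runs; the function name, the `factor` and `min_history` defaults, and the sample durations are all assumptions chosen for illustration.

```python
from statistics import median
from typing import List


def slow_runs(durations_s: List[float], factor: float = 2.0, min_history: int = 3) -> List[int]:
    """Return indexes of runs slower than `factor` times the median of earlier runs."""
    flagged = []
    for index, duration in enumerate(durations_s):
        history = durations_s[:index]
        # Skip the first few runs: there is not enough history to judge them.
        if len(history) >= min_history and duration > factor * median(history):
            flagged.append(index)
    return flagged


durations = [120, 130, 125, 128, 410, 126]  # seconds per run, made-up sample data
alerts = slow_runs(durations)
```

Using the median rather than the mean keeps a single slow run from inflating the baseline and hiding the next one.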

Moreover, embracing a culture of innovation and continuous improvement is vital for organizations seeking to enhance their operational effectiveness. Encouraging employees to propose and implement process enhancements fosters a dynamic and adaptive work environment, driving innovation and efficiency across projects.

Collaboration among cross-functional teams plays a pivotal role in ensuring the seamless execution of projects. Effective communication channels, shared goals, and a collaborative mindset are essential for breaking down silos and promoting synergy among different departments, leading to cohesive project outcomes and streamlined workflows.

Furthermore, investing in employee training and skill development initiatives is key to nurturing a competent workforce capable of navigating complex project requirements. Equipping employees with the latest tools, technologies, and best practices empowers them to deliver high-quality work, adapt to evolving industry trends, and drive organizational success.

By incorporating these additional strategies alongside automation, security measures, and monitoring practices, organizations can establish a comprehensive framework for efficient implementation, fostering innovation, collaboration, and continuous growth to thrive in today’s competitive business landscape.

Conclusion

Streamlining your Databricks Lakehouse implementation is essential for maximizing efficiency, scalability, and performance in your data analytics processes. By leveraging the powerful capabilities of Databricks, organizations can unify data engineering, data science, and business analytics on a single platform, driving better insights and decision-making. Embracing best practices, optimizing workflows, and ensuring collaboration across teams are key factors in achieving success with your Databricks Lakehouse implementation. By focusing on continuous improvement and innovation, businesses can stay ahead in the ever-evolving landscape of data management and analytics.