Unlocking a New Realm of Data Processing Capabilities

Integrating Python stored procedures into Snowflake’s ecosystem changes how data workflows can be built. With Snowpark, data practitioners can run custom Python code directly inside Snowflake, next to the data, instead of exporting data to a separate processing environment. This article explores the advantages of Snowpark Python stored procedures, from operational efficiency and scalability to flexible data manipulation. It then shows how to implement them and looks at scenarios where they help optimize data pipelines and support advanced analytics. Whether you are navigating intricate data landscapes or steering complex analytics initiatives, knowing how to use Python stored procedures in Snowflake can help you reach insights faster, make better decisions, and strengthen overall data governance.

Key Advantages of Snowpark Python Stored Procedures

Enhanced Performance

In data processing and analytics, performance is paramount. Because a Snowpark Python stored procedure runs inside Snowflake, it avoids the round trip of extracting data to an external Python environment and loading results back. Snowpark DataFrame operations inside the procedure are translated to SQL and executed by Snowflake’s engine, so much of the heavy lifting happens where the data already lives. This can shorten the path to insights and actionable results.

Flexible Data Processing Capabilities

One of the key advantages of Snowpark Python Stored Procedures is their flexibility in handling diverse data processing tasks. Whether it’s a simple data transformation or a complex analytical operation, Python’s versatility combined with Snowflake’s processing engine lets a wide range of tasks be expressed and executed efficiently in one place.

Seamless Integration with Existing Systems

Snowpark Python Stored Procedures fit into existing systems and workflows. A procedure is invoked with a standard SQL CALL statement, so any tool that can run SQL can trigger it, including BI tools, orchestration frameworks, and Snowflake tasks. Organizations can therefore keep their current infrastructure and tools while adding Python-based processing within Snowflake, which also makes the transition to Snowpark Python Stored Procedures smoother.

Scalability and Resource Optimization

Another significant advantage of Snowpark Python Stored Procedures is scalability combined with efficient use of resources. Work expressed through the Snowpark DataFrame API is executed by the virtual warehouse. As data volumes and processing requirements grow, you can therefore scale by resizing the warehouse rather than rewriting code. Python logic that runs in the handler itself executes on a single warehouse node, so memory-intensive code may call for a Snowpark-optimized warehouse. Because warehouses can auto-suspend when idle and compute is billed only while they run, organizations pay for actual processing rather than idle infrastructure, which helps control costs.

Extensibility and Customization

Snowpark Python Stored Procedures offer extensibility and customization options that empower organizations to tailor data processing tasks according to their specific requirements. By allowing custom Python scripts to be integrated seamlessly within Snowflake, businesses can create bespoke data processing workflows that address unique challenges and demands. This level of customization not only enhances the efficiency of data processing but also enables organizations to derive more value from their data assets.
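To make this concrete, here is a minimal sketch that assembles a CREATE PROCEDURE statement embedding a custom Python handler inline. The procedure name ROW_COUNT, the one-line handler, and the RUNTIME_VERSION of '3.11' are illustrative assumptions. Confirm which Python runtimes your Snowflake account supports before using it.

```python
import textwrap

# Illustrative handler: Snowflake passes the Snowpark Session as the first
# argument; the remaining parameters map to the procedure's SQL arguments.
HANDLER_SOURCE = textwrap.dedent(
    """
    def run(session, table_name):
        return f"{table_name} has {session.table(table_name).count()} rows"
    """
).strip()


def create_procedure_ddl(name, args, returns, handler_source, handler="run",
                         runtime_version="3.11",
                         packages=("snowflake-snowpark-python",),
                         execute_as="OWNER"):
    """Assemble CREATE OR REPLACE PROCEDURE DDL with an inline Python handler.

    args is a list of (argument_name, sql_type) pairs; execute_as selects
    owner's rights ("OWNER") or caller's rights ("CALLER").
    """
    if execute_as not in ("OWNER", "CALLER"):
        raise ValueError("execute_as must be 'OWNER' or 'CALLER'")
    arg_list = ", ".join(f"{arg_name} {sql_type}" for arg_name, sql_type in args)
    package_list = ", ".join(f"'{package}'" for package in packages)
    return "\n".join([
        f"CREATE OR REPLACE PROCEDURE {name}({arg_list})",
        f"  RETURNS {returns}",
        "  LANGUAGE PYTHON",
        f"  RUNTIME_VERSION = '{runtime_version}'",
        f"  PACKAGES = ({package_list})",
        f"  HANDLER = '{handler}'",
        f"  EXECUTE AS {execute_as}",
        "AS",
        "$$",
        handler_source,  # the Python code between the $$ delimiters
        "$$",
    ])


if __name__ == "__main__":
    print(create_procedure_ddl("ROW_COUNT", [("table_name", "VARCHAR")], "VARCHAR", HANDLER_SOURCE))
```

You can run the generated string in a Snowflake worksheet or with session.sql(ddl).collect(). After that, CALL ROW_COUNT('ORDERS') would return the row count of a hypothetical ORDERS table.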

Enhanced Data Security

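Data security is a critical aspect of data processing, and Snowpark Python Stored Procedures inherit Snowflake’s security model rather than relying on separate tooling. The Python code runs inside Snowflake, so data does not have to leave the platform to be processed. Snowflake’s role-based access control governs who can use the procedure and the objects it touches. The EXECUTE AS OWNER or EXECUTE AS CALLER setting determines whose privileges the procedure runs with. Python handlers also run in a restricted sandbox that blocks outbound network access unless an administrator explicitly enables it through an external access integration. Combined with Snowflake’s encryption of data at rest and in transit, these controls help organizations maintain the confidentiality and integrity of their data throughout the processing pipeline.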
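Access to a procedure is itself granted through Snowflake roles. The sketch below builds a GRANT USAGE ON PROCEDURE statement, which identifies a procedure by its name plus argument types. It rejects anything that is not a plain unquoted identifier, a simple guard against SQL injection when statements are assembled from variables. The procedure and role names in the test are hypothetical, and the identifier rule is deliberately stricter than Snowflake’s full grammar: it does not accept quoted identifiers or parameterized types such as NUMBER(38,0).

```python
import re

# Unquoted Snowflake identifier: starts with a letter or underscore, followed by
# letters, digits, underscores, or dollar signs. Quoted identifiers are rejected.
_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_$]*")


def check_identifier(value):
    """Return value unchanged if it is a plain identifier, else raise ValueError."""
    if not _IDENTIFIER.fullmatch(value):
        raise ValueError(f"not a plain identifier: {value!r}")
    return value


def grant_usage_ddl(procedure, arg_types, role):
    """Build GRANT USAGE ON PROCEDURE for a (possibly database.schema-qualified) name."""
    qualified = ".".join(check_identifier(part) for part in procedure.split("."))
    signature = ", ".join(check_identifier(t).upper() for t in arg_types)
    return f"GRANT USAGE ON PROCEDURE {qualified}({signature}) TO ROLE {check_identifier(role)}"
```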

The key advantages of Snowpark Python Stored Procedures encompass enhanced performance, flexible data processing capabilities, seamless integration with existing systems, scalability, resource optimization, extensibility, customization, and enhanced data security. By leveraging these benefits, organizations can streamline their data processing workflows, accelerate insights generation, and drive informed decision-making processes.

Implementing Snowpark Python Stored Procedures

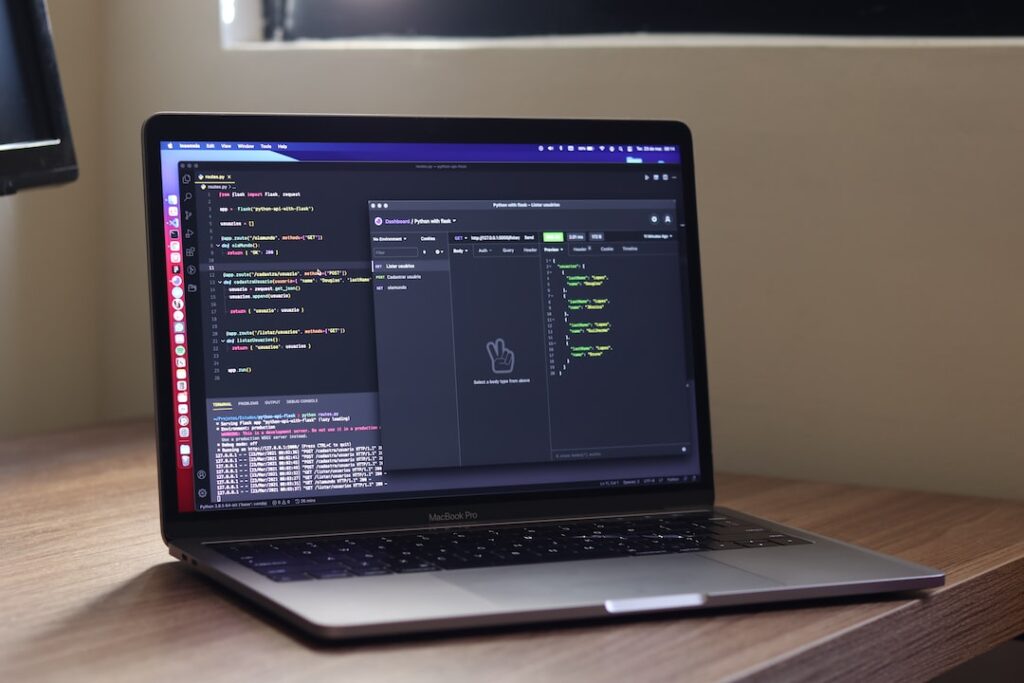

Setting Up the Snowpark Environment

Implementing Python stored procedures in Snowflake using Snowpark requires setting up the right environment. Locally, this means installing the snowflake-snowpark-python package in a Python environment and supplying connection parameters: account, user, and credentials, plus optionally role, warehouse, database, and schema. You then verify the setup by opening a session and running a simple query. Version compatibility deserves attention. Keep your local Python version aligned with the runtime version your procedures will use in Snowflake, and depend only on package versions available in Snowflake’s Anaconda channel. That way, code tested locally behaves the same way once deployed.
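Here is a minimal sketch of that setup, assuming the package has been installed with pip install snowflake-snowpark-python. The environment variable names are hypothetical. Session.builder.configs(...).create() opens the Snowpark session, and the version query is a quick end-to-end check.

```python
import os

# Hypothetical environment variable names; adapt them to your own configuration.
_ENV_KEYS = {
    "account": "SNOWFLAKE_ACCOUNT",
    "user": "SNOWFLAKE_USER",
    "password": "SNOWFLAKE_PASSWORD",
    "role": "SNOWFLAKE_ROLE",
    "warehouse": "SNOWFLAKE_WAREHOUSE",
    "database": "SNOWFLAKE_DATABASE",
    "schema": "SNOWFLAKE_SCHEMA",
}
_REQUIRED = ("account", "user", "password")


def load_connection_parameters(env=None):
    """Build the dict passed to Session.builder.configs() from environment variables."""
    env = os.environ if env is None else env
    params = {key: env[var] for key, var in _ENV_KEYS.items() if env.get(var)}
    missing = [key for key in _REQUIRED if key not in params]
    if missing:
        raise ValueError(f"missing connection settings: {', '.join(missing)}")
    return params


def create_session(env=None):
    """Open a Snowpark session and print the Snowflake version as a setup check."""
    # Imported lazily so the parameter helper works even without the package installed.
    from snowflake.snowpark import Session

    session = Session.builder.configs(load_connection_parameters(env)).create()
    print(session.sql("SELECT CURRENT_VERSION()").collect())
    return session
```

Reading credentials from the environment keeps them out of source code. In production you may prefer a stronger mechanism such as key-pair authentication.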

Creating and Executing Python Stored Procedures

Once the Snowpark environment is set up, the next step is creating and executing Python stored procedures. A handler is an ordinary Python function whose first parameter receives a Snowpark Session; the remaining parameters map to the procedure’s SQL arguments. You can create the procedure with a CREATE PROCEDURE ... LANGUAGE PYTHON statement that contains the handler code. Alternatively, you can register a local function from Snowpark with session.sproc.register or the @sproc decorator. Either way, you invoke it with CALL. Good practice includes keeping handlers small and testable, returning a clear status value, and raising exceptions on failure so that the calling statement reports the error. Third-party libraries can be added through the procedure’s PACKAGES clause, drawing from Snowflake’s Anaconda channel, or imported from files on a stage. Because external code runs with the procedure’s privileges, review it with the same care as your own.
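The sketch below shows both halves of the lifecycle: a handler whose first parameter is the Session, and the code that registers and invokes it from a client session. The ORDERS and LARGE_ORDERS tables, the AMOUNT column, and the procedure name are hypothetical. Types are passed explicitly through return_type and input_types, which exclude the session parameter.

```python
def filter_large_orders(session, source_table, target_table, min_amount):
    """Stored procedure handler; Snowflake passes the Session as the first argument."""
    orders = session.table(source_table)
    # The filter is translated to SQL and runs in the warehouse; rows are not pulled locally.
    large = orders.filter(orders["AMOUNT"] >= min_amount)
    large.write.save_as_table(target_table, mode="overwrite")
    return f"wrote {session.table(target_table).count()} rows to {target_table}"


def register_and_call(session):
    """Register the handler as a temporary procedure, then invoke it (like SQL CALL)."""
    # Imported lazily so the handler above can be unit-tested without the package.
    from snowflake.snowpark.types import FloatType, StringType

    session.sproc.register(
        func=filter_large_orders,
        name="FILTER_LARGE_ORDERS",  # hypothetical procedure name
        return_type=StringType(),
        input_types=[StringType(), StringType(), FloatType()],  # session excluded
        packages=["snowflake-snowpark-python"],
        replace=True,
        is_permanent=False,  # temporary: dropped when the session ends
    )
    return session.call("FILTER_LARGE_ORDERS", "ORDERS", "LARGE_ORDERS", 1000.0)
```

Because the handler only talks to the session and DataFrame objects it receives, you can unit-test it with lightweight stand-ins before deploying it.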

Optimizing Data Processing Efficiency

Efficiency plays a crucial role in data processing tasks. The most effective optimization for Python stored procedures is usually to keep work inside Snowflake. Express filters, joins, and aggregations with the Snowpark DataFrame API so they are compiled to SQL and executed in parallel by the warehouse. Avoid pulling large tables into the handler’s memory with methods such as to_pandas() or collect(). Intermediate results that are reused can be materialized with DataFrame.cache_result(), and repeated identical queries may be served from Snowflake’s result cache. Beyond that, fine-tune the Python code itself, size the warehouse to the workload, and monitor procedure runs through query history and query profiles. Note that Snowflake does not use traditional indexes. Query performance depends instead on micro-partition pruning, which you can influence with clustering keys and, for selective point lookups, the search optimization service.
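The following sketch illustrates the keep-work-in-Snowflake principle. The aggregation runs in the warehouse and produces only one row per region, and cache_result() materializes that small result into a temporary table for reuse. The SALES table and its REGION and AMOUNT columns are assumptions made for illustration.

```python
def build_region_summary(session, source_table):
    """Aggregate inside Snowflake and cache the (small) result for reuse."""
    sales = session.table(source_table)
    # group_by/agg compile to SQL and run on the warehouse; ("AMOUNT", "sum")
    # is the (column name, aggregate function name) form accepted by agg().
    summary = sales.group_by("REGION").agg(("AMOUNT", "sum"), ("AMOUNT", "count"))
    # cache_result() writes the result to a temporary table, so later steps
    # read it instead of re-running the aggregation.
    return summary.cache_result()


def run(session, source_table, target_table):
    """Handler: persist the summary without pulling raw rows into Python."""
    # Anti-pattern for large tables: session.table(source_table).to_pandas()
    # would copy every row into the handler's memory before aggregating.
    summary = build_region_summary(session, source_table)
    summary.write.save_as_table(target_table, mode="overwrite")
    return f"{summary.count()} regions summarized"
```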

Applications of Snowpark Python Stored Procedures

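Near-Real-Time Data Analysis

Snowpark Python stored procedures can support near-real-time data analysis by running on a frequent schedule. Paired with Snowflake tasks, and optionally with streams that track newly arrived rows, a procedure can process fresh data shortly after it lands, helping businesses make informed decisions quickly. Stored procedures run on demand or on a schedule rather than continuously, so they suit frequent micro-batches better than true event-by-event streaming.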
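Scheduling is handled by Snowflake tasks. The helpers below sketch the DDL for a task that calls a stored procedure every minute and, optionally, only when a stream reports new data. The task, warehouse, stream, and procedure names are hypothetical, and the one-minute schedule is just an example.

```python
def create_task_ddl(task_name, warehouse, procedure_call, schedule="1 MINUTE", stream=None):
    """Build CREATE TASK DDL that runs a stored procedure call on a schedule."""
    lines = [
        f"CREATE OR REPLACE TASK {task_name}",
        f"  WAREHOUSE = {warehouse}",
        f"  SCHEDULE = '{schedule}'",
    ]
    if stream:
        # Skip the run entirely when the stream has no new rows to process.
        lines.append(f"  WHEN SYSTEM$STREAM_HAS_DATA('{stream}')")
    lines += ["AS", f"  {procedure_call}"]
    return "\n".join(lines)


def resume_task_ddl(task_name):
    """Tasks are created suspended; they only start running after RESUME."""
    return f"ALTER TASK {task_name} RESUME"
```

Both strings can be executed in a worksheet or with session.sql(...).collect(). Remember to resume the task after creating it, since new tasks start out suspended.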

Batch Processing Scenarios

Snowpark Python stored procedures are also ideal for batch processing scenarios where large datasets need to be processed in a scalable and efficient manner. By writing custom Python code within Snowflake, businesses can automate and streamline their batch processing workflows for improved productivity.
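Here is a minimal batch-processing sketch: a handler that backfills a target table one day at a time. The LOAD_DATE column, the table names, and the daily granularity are assumptions. Each day is a separate append, so this sketch does not make reruns idempotent. A production backfill would need that, for example by deleting a day’s rows before re-inserting them.

```python
from datetime import date, timedelta


def daily_batches(start, end):
    """Yield every date from start to end, inclusive (one batch per day)."""
    day = start
    while day <= end:
        yield day
        day += timedelta(days=1)


def run_backfill(session, source_table, target_table, start_iso, end_iso):
    """Handler: append source rows to target_table one LOAD_DATE at a time."""
    start, end = date.fromisoformat(start_iso), date.fromisoformat(end_iso)
    source = session.table(source_table)
    processed = 0
    for day in daily_batches(start, end):
        # Each batch is a filtered query executed by the warehouse.
        batch = source.filter(source["LOAD_DATE"] == day)
        batch.write.save_as_table(target_table, mode="append")
        processed += 1
    return f"processed {processed} daily batches"
```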

Data Transformation Use Cases

Furthermore, Snowpark Python stored procedures can be utilized for various data transformation use cases. Whether it’s cleansing data, aggregating information, or performing complex transformations, these procedures offer a flexible and powerful solution for transforming data within Snowflake’s environment.
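For a cleansing-style transformation, the sketch below uses two built-in Snowpark DataFrame methods, dropna and drop_duplicates, so the work stays inside Snowflake. The CUSTOMER_ID and EMAIL columns and the table names are hypothetical. When duplicates are removed on a subset of columns, which of the duplicate rows survives is not guaranteed.

```python
def cleanse_customers(session, source_table, target_table):
    """Handler: drop incomplete rows and duplicates, then persist the result."""
    raw = session.table(source_table)
    cleaned = (
        raw.dropna(subset=["CUSTOMER_ID", "EMAIL"])  # remove rows missing key fields
        .drop_duplicates("CUSTOMER_ID", "EMAIL")     # keep one (arbitrary) row per key
    )
    cleaned.write.save_as_table(target_table, mode="overwrite")
    return f"kept {cleaned.count()} of {raw.count()} rows"
```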

Enhanced Scalability

One of the key advantages of utilizing Snowpark Python stored procedures is the enhanced scalability they offer. As datasets keep growing, the ability to scale data processing operations becomes crucial. The DataFrame operations in a Snowpark Python procedure run on Snowflake’s virtual warehouses, so increasing data volumes can usually be handled by resizing the warehouse rather than re-architecting the code.

Integration with Snowflake Ecosystem

Snowpark Python stored procedures are native Snowflake objects, so they work directly with the rest of the Snowflake environment. They can read and write tables and views, call UDFs and other procedures, and be scheduled with tasks, all under the same governance model. This gives businesses direct access to Snowflake’s data warehousing capabilities alongside the power of Python for data processing.

Advanced Analytics Capabilities

In addition to traditional data processing tasks, Snowpark Python stored procedures bring advanced analytics capabilities to the table. Businesses can implement sophisticated analytical models and algorithms using Python code within Snowflake, enabling them to extract valuable insights from their data efficiently.

Cost-Effective Solution

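Snowpark Python stored procedures can be a cost-effective option for data processing and analysis. Because the code runs on Snowflake’s cloud-based compute, there is no separate processing cluster to provision and maintain, and warehouses that auto-suspend when idle incur compute charges only while they run. Compared with maintaining traditional on-premises infrastructure, this can reduce costs, although actual savings depend on the workload and on warehouse sizing.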

Summary of Applications

The applications of Snowpark Python stored procedures extend beyond basic data processing tasks. From near-real-time data analysis to complex data transformations and advanced analytics, these procedures empower businesses to unlock the full potential of their data within the Snowflake environment. With enhanced scalability, seamless integration, advanced analytics capabilities, and cost-effectiveness, Snowpark Python stored procedures are a valuable asset for businesses looking to drive data-driven decision-making and innovation.

Overcoming Challenges and Solutions

Managing Large Datasets Effectively

Managing large datasets has become a common challenge for many organizations. This section will delve into strategies and tools that can help businesses effectively handle vast amounts of data, ensuring smooth operations and optimal performance.

From cloud-based storage solutions to big data analytics platforms, businesses have a plethora of options to manage their large datasets efficiently. Implementing data compression techniques, utilizing data partitioning methods, and employing parallel processing are some of the strategies that can enhance data processing speed and minimize storage costs. Additionally, adopting data governance frameworks and establishing clear data quality standards are vital in ensuring the accuracy and reliability of large datasets.

Ensuring Data Security Measures

Data security is a critical concern for businesses of all sizes. This part of the blog will explore the importance of implementing robust data security measures to protect sensitive information from cyber threats and unauthorized access. Various encryption techniques, access controls, and best practices will be discussed.

Data breaches pose a significant risk to businesses. Implementing a multi-layered approach to data security, including encryption at rest and in transit, role-based access controls, and regular security audits, is essential in safeguarding valuable data assets. Furthermore, raising employee awareness about data security best practices through training programs and conducting regular security assessments can help mitigate potential vulnerabilities.

Resolving Common Issues

Despite advancements in technology, businesses often encounter common issues when dealing with data management and analytics. This segment will focus on identifying these challenges and providing practical solutions to address them. From troubleshooting data inconsistencies to optimizing data workflows, readers will gain insights into overcoming hurdles in data processing and analysis.

Data integrity issues, such as duplicate records and missing values, can impact the accuracy of analytical results. Implementing data validation processes, data cleansing techniques, and automated data quality checks can help in maintaining data consistency and reliability. Additionally, leveraging data visualization tools for exploratory data analysis and incorporating machine learning algorithms for predictive analytics can streamline data processing tasks and uncover valuable insights.
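Automated quality checks like these can also run inside a Snowpark Python stored procedure. This sketch counts duplicate keys and missing values with Snowpark DataFrame operations, so each count executes as a query in Snowflake rather than on rows pulled into Python. The key and required column names, and the fail-on-any-problem policy in assert_quality, are illustrative assumptions.

```python
def quality_report(df, key_columns, required_columns):
    """Count duplicate keys and missing values; each count runs as a Snowflake query."""
    total = df.count()
    distinct_keys = df.select(*key_columns).distinct().count()
    # Rows beyond the first for each key value are counted as duplicates.
    report = {"rows": total, "duplicate_key_rows": total - distinct_keys}
    for column in required_columns:
        report[f"missing_{column}"] = df.filter(df[column].is_null()).count()
    return report


def assert_quality(report):
    """Fail the pipeline step if any check found problems (hypothetical policy)."""
    problems = {name: value for name, value in report.items() if name != "rows" and value > 0}
    if problems:
        raise ValueError(f"data quality checks failed: {problems}")
    return report
```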

Effectively managing large datasets, ensuring robust data security measures, and addressing common data management issues are crucial for organizations to harness the full potential of their data assets. By implementing best practices, leveraging innovative technologies, and fostering a data-driven culture, businesses can overcome challenges and achieve sustainable growth in today’s competitive landscape.

Conclusion

Embracing Snowpark Python stored procedures can significantly enhance data processing capabilities by bringing the power and flexibility of Python into Snowflake. This integration enables data manipulation, transformation, and analysis where the data lives, helping organizations derive valuable insights and make informed decisions. As Snowpark continues to evolve, the opportunities for optimizing data processing workflows with Python stored procedures keep growing, paving the way for greater efficiency and productivity in data analytics.