The Evolution of Machine Learning

Machine learning models are increasingly trained on more data, and with more parameters, than a single machine can handle in reasonable time or memory. Training on one machine is therefore proving inadequate for many of today’s AI applications. Distributed training addresses this by splitting the computational workload across multiple machines or devices. Because the workers process data in parallel, the approach scales to larger datasets and models and can shorten wall-clock training time, although the gains depend on how well communication and synchronization are managed. For organizations building advanced AI models for real-world deployment, these techniques have become essential. The sections below cover the main challenges of distributed training, recent advancements, and the trends likely to shape it.

Challenges in Distributed Model Training

Communication Overhead: Managing Data Exchange Efficiency

One of the foremost challenges in distributed model training is communication overhead. Training a model across multiple nodes or devices requires them to exchange data continuously, typically gradients or parameters at every step. This back-and-forth adds latency, and when the network is slower than the computation it can dominate total training time. Strategies to reduce the cost include optimizing network traffic, using efficient data transfer protocols, and cutting unnecessary communication, for example by compressing updates or synchronizing less often. Streamlining communication in these ways improves the efficiency and speed of distributed training.
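One common way to cut unnecessary communication is to send only the largest entries of each gradient. The sketch below illustrates that idea (top-k sparsification) in plain Python. The function names `top_k_sparsify` and `densify` are hypothetical, and the sketch omits the local accumulation of dropped values that practical schemes usually add.

```python
from typing import Dict, List


def top_k_sparsify(gradient: List[float], k: int) -> Dict[int, float]:
    """Keep the k entries with the largest magnitude as {index: value}."""
    if k <= 0:
        return {}
    # Rank indices by absolute value, largest first.
    ranked = sorted(range(len(gradient)), key=lambda i: abs(gradient[i]), reverse=True)
    return {i: gradient[i] for i in ranked[:k]}


def densify(sparse: Dict[int, float], size: int) -> List[float]:
    """Rebuild a dense vector on the receiving side; dropped entries become 0."""
    dense = [0.0] * size
    for index, value in sparse.items():
        dense[index] = value
    return dense


gradient = [0.01, -0.9, 0.02, 0.5, -0.03]
message = top_k_sparsify(gradient, k=2)      # only 2 of 5 values are sent
restored = densify(message, len(gradient))   # what the receiver reconstructs
```

The trade-off is accuracy for bandwidth: a smaller `k` means less traffic but a coarser approximation of the true gradient.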

Synchronization Issues: Ensuring Consistency and Convergence

Another critical hurdle is synchronization. With multiple nodes training at once, keeping every node on the most up-to-date version of the model is complex. Common approaches include parameter server architectures, which hold a shared copy of the parameters; synchronous protocols, in which all nodes wait for one another before each update; and asynchronous methods, which let nodes proceed without waiting at the cost of applying some updates computed from stale parameters. Managing this trade-off carefully keeps model updates consistent across nodes, which supports convergence and training efficiency.
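As an illustration of a simple synchronization protocol, the sketch below averages the gradients from all workers and applies one identical update, so every node ends the step with the same parameters. It simulates the workers in a single process; `average_gradients` and `synchronous_step` are hypothetical names, not any framework's API.

```python
from typing import List


def average_gradients(worker_gradients: List[List[float]]) -> List[float]:
    """Element-wise mean of the gradients reported by every worker."""
    num_workers = len(worker_gradients)
    return [sum(values) / num_workers for values in zip(*worker_gradients)]


def synchronous_step(params, worker_gradients, learning_rate):
    """Apply one SGD update that every worker would adopt identically."""
    mean_grad = average_gradients(worker_gradients)
    return [p - learning_rate * g for p, g in zip(params, mean_grad)]


params = [1.0, 2.0]
grads = [[0.5, 1.0], [1.5, 3.0]]   # one gradient per worker
params = synchronous_step(params, grads, learning_rate=0.5)
```

In a real cluster the averaging would be a collective operation over the network, and the step could not finish until the slowest worker had reported.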

Scalability Concerns: Addressing Growing Data and Model Complexity

Scalability is a significant concern, particularly with vast datasets and complex models. The training process must keep scaling as data volume and model size grow. Two complementary techniques address this: data parallelism, in which each worker holds a full copy of the model and trains on a different slice of the data, and model parallelism, in which the model itself is split across workers because it is too large for one device. Distributed training frameworks implement both. Used well, these strategies make large-scale training tasks manageable and keep resource utilization high.
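Data parallelism starts with giving each worker its own portion of the dataset. A minimal sketch of that partitioning step follows, assuming each worker knows its index (`rank`) and the total number of workers (`world_size`). Both names are conventions borrowed for illustration, and `shard_for_worker` is hypothetical.

```python
from typing import List, Sequence


def shard_for_worker(dataset: Sequence, rank: int, world_size: int) -> List:
    """Return the examples assigned to worker `rank` out of `world_size`."""
    if not 0 <= rank < world_size:
        raise ValueError("rank must be in [0, world_size)")
    # Strided slicing gives disjoint shards whose sizes differ by at most one.
    return list(dataset[rank::world_size])


dataset = list(range(10))
shards = [shard_for_worker(dataset, rank, world_size=3) for rank in range(3)]
```

Every example lands in exactly one shard, so adding workers shrinks each worker's share without duplicating work.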

Ensuring Data Privacy and Security

Data privacy and security are central concerns in distributed training. Because data is spread across multiple nodes, sensitive information must stay protected at rest on each node and in transit between them. Encryption, secure communication channels, and access control mechanisms are the main safeguards. Robust security measures reduce the risk of data breaches and unauthorized access, and they help distributed training environments meet compliance requirements.

Handling Heterogeneous Computing Resources

Distributed training often runs on heterogeneous computing resources, including CPUs, GPUs, and specialized accelerators. These devices differ in speed and memory, so a workload split evenly leaves fast devices idle while they wait for slow ones. Resource-aware scheduling, workload balancing, and dynamic resource allocation address this by matching the work assigned to each device to its capacity. Coordinating heterogeneous resources well raises utilization and shortens training time.
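Workload balancing can be as simple as sizing each device's share of a batch in proportion to its measured throughput. The sketch below shows one way to do that. The function name, device names, and throughput figures are all hypothetical.

```python
from typing import Dict


def split_batch_by_throughput(global_batch: int, throughput: Dict[str, float]) -> Dict[str, int]:
    """Divide `global_batch` examples among devices in proportion to throughput."""
    total = sum(throughput.values())
    # Start with the rounded-down proportional share for each device.
    shares = {name: int(global_batch * rate / total) for name, rate in throughput.items()}
    # Hand leftover examples to the fastest devices first.
    leftover = global_batch - sum(shares.values())
    for name in sorted(throughput, key=throughput.get, reverse=True)[:leftover]:
        shares[name] += 1
    return shares


# Hypothetical throughput figures in examples per second.
shares = split_batch_by_throughput(100, {"gpu0": 300.0, "gpu1": 150.0, "cpu0": 50.0})
```

With shares sized this way, all devices should finish a step at roughly the same time, assuming the throughput measurements stay accurate.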

Adapting to Network Variability and Failures

Networks are dynamic, and variability and failures can degrade or interrupt distributed training. Coping with network fluctuations, packet loss, and node failures requires fault tolerance mechanisms and adaptive networking protocols. Fault recovery mechanisms, network redundancy, and distributed consensus algorithms all help keep training workflows reliable and resilient. Planning for these failures in advance minimizes disruptions, keeps training running, and makes performance more consistent.
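A basic fault recovery mechanism is periodic checkpointing, so that a restarted job resumes from the last saved step rather than from scratch. The sketch below is one possible shape for it, using JSON for simplicity. The file layout and function names are assumptions, and real systems would store framework-specific state.

```python
import json
import os
import tempfile


def save_checkpoint(path: str, step: int, params: list) -> None:
    """Write the checkpoint atomically so a crash never leaves a partial file."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as handle:
        json.dump({"step": step, "params": params}, handle)
    os.replace(tmp_path, path)  # atomic rename on the same filesystem


def load_checkpoint(path: str):
    """Return (step, params), or (0, None) when no checkpoint exists yet."""
    if not os.path.exists(path):
        return 0, None
    with open(path) as handle:
        state = json.load(handle)
    return state["step"], state["params"]
```

On startup a worker would call `load_checkpoint` and continue from the returned step. Writing to a temporary file first means an interrupted save cannot corrupt the previous checkpoint.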

Incorporating Federated Learning Principles

Federated learning offers a decentralized approach to collaborative training in which data remains on individual devices or nodes. Incorporating federated techniques into a distributed training framework preserves data privacy and, because raw data is never transferred, avoids large data movements; only model updates are exchanged. This lets distributed entities train a shared model while keeping their data confidential. Federated learning therefore helps with data silos, privacy regulations, and data ownership concerns in distributed training scenarios.
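The aggregation step at the heart of federated learning can be sketched as a weighted average of the clients' locally trained weights, in the style of federated averaging. The example assumes each client reports a flat weight vector and its local example count, and `federated_average` is a hypothetical name.

```python
from typing import List, Tuple


def federated_average(client_updates: List[Tuple[List[float], int]]) -> List[float]:
    """Average client weights, weighting each client by its example count."""
    total_examples = sum(count for _, count in client_updates)
    size = len(client_updates[0][0])
    averaged = [0.0] * size
    for weights, count in client_updates:
        for i, w in enumerate(weights):
            averaged[i] += w * count / total_examples
    return averaged


# Each entry is (locally trained weights, number of local examples).
updates = [([1.0, 2.0], 1), ([3.0, 6.0], 3)]
global_weights = federated_average(updates)
```

Only the weight vectors and counts cross the network. The raw examples never leave the clients.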

Optimizing Hyperparameter Tuning and Model Selection

Hyperparameter tuning and model selection strongly affect the success of distributed training, because the chosen hyperparameters and model architecture influence both training performance and convergence. Automated hyperparameter optimization, Bayesian optimization, and systematic model selection strategies improve training efficiency and model accuracy. Because candidate configurations can often be evaluated independently, the search itself can be distributed across workers. Systematic tuning shortens the training process and improves generalization.
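Random search is among the simplest forms of automated hyperparameter optimization, and it serves here as a stand-in for more sophisticated methods such as Bayesian optimization. In the sketch below, `toy_loss` is a made-up objective standing in for a real validation loss, and all names are hypothetical.

```python
import random


def random_search(objective, space, trials, seed=0):
    """Sample `trials` configurations uniformly and return the best one."""
    rng = random.Random(seed)
    best_config, best_score = None, float("inf")
    for _ in range(trials):
        config = {name: rng.uniform(low, high) for name, (low, high) in space.items()}
        score = objective(config)
        if score < best_score:
            best_config, best_score = config, score
    return best_config, best_score


def toy_loss(config):
    # Stand-in for a validation loss; minimized at learning_rate = 0.1.
    return (config["learning_rate"] - 0.1) ** 2


best, loss = random_search(toy_loss, {"learning_rate": (0.0, 1.0)}, trials=50)
```

Each trial is independent of the others, which is what makes this kind of search easy to spread across many workers.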

Addressing Data Imbalance and Bias Challenges

Data imbalance and bias are common concerns in distributed training, especially with diverse and unbalanced datasets. When data is partitioned across nodes, individual partitions can differ in class distribution from the dataset as a whole. Left unaddressed, these disparities skew the model and reduce prediction accuracy. Data augmentation, class weighting, and bias mitigation strategies are the usual remedies. Addressing these issues early improves model fairness, reduces prediction errors, and promotes inclusivity in the resulting applications.
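Class weighting is often implemented by giving each class a loss weight inversely proportional to its frequency. The sketch below uses one common formulation, and `inverse_frequency_weights` is a hypothetical name. In a distributed setting the label counts would need to be combined across all nodes first, which is not shown.

```python
from collections import Counter
from typing import Dict, Hashable, Iterable


def inverse_frequency_weights(labels: Iterable[Hashable]) -> Dict[Hashable, float]:
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = sum(counts.values())
    n_classes = len(counts)
    return {label: n_samples / (n_classes * count) for label, count in counts.items()}


labels = ["cat"] * 8 + ["dog"] * 2
weights = inverse_frequency_weights(labels)
```

The rare class receives the larger weight, so its errors count for more in the loss. A perfectly balanced dataset yields a weight of 1.0 for every class.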

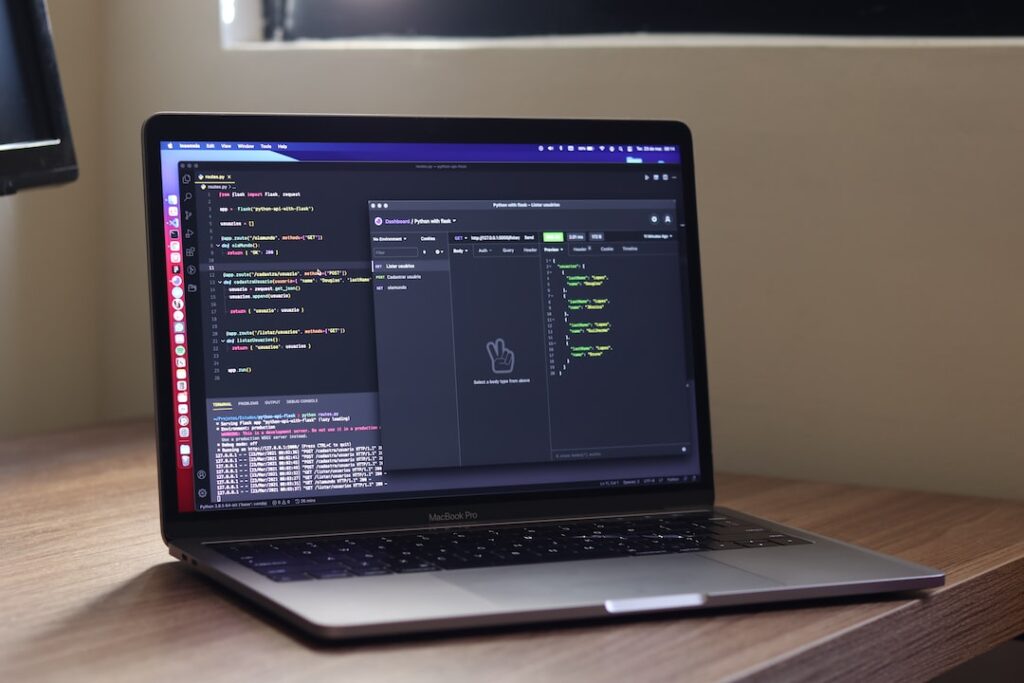

Monitoring and Visualizing Training Progress

Monitoring and visualizing training progress are integral to effective distributed training workflows. Tracking key performance metrics, convergence rates, and resource utilization provides insight into the training process. Visualization tools, dashboards, and real-time monitoring systems let practitioners assess model performance, identify bottlenecks, and make informed decisions to improve training outcomes. Robust monitoring also makes distributed training environments more transparent and accountable.
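One bottleneck that monitoring can surface is a straggler, a worker that is much slower than its peers. As a rough sketch, the check below flags workers whose step time exceeds a multiple of the median. The 1.5 threshold, the worker names, and the timings are illustrative assumptions.

```python
from statistics import median
from typing import Dict, List


def find_stragglers(step_seconds: Dict[str, float], tolerance: float = 1.5) -> List[str]:
    """Return workers whose step time exceeds `tolerance` times the median."""
    typical = median(step_seconds.values())
    return sorted(w for w, t in step_seconds.items() if t > tolerance * typical)


timings = {"worker0": 1.0, "worker1": 1.1, "worker2": 2.4, "worker3": 0.9}
slow = find_stragglers(timings)
```

A dashboard could run a check like this on every reporting interval and highlight the flagged workers for investigation.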

Collaborative Knowledge Sharing and Research Advancements

Promoting collaborative knowledge sharing and research advancements is essential for fostering innovation and progress in distributed model training. Engaging in knowledge exchange, sharing best practices, and collaborating on research initiatives enable practitioners to stay abreast of the latest developments and emerging trends in the field. Participation in community forums, research conferences, and collaborative projects facilitates cross-disciplinary learning and promotes the dissemination of cutting-edge research findings. By actively participating in knowledge-sharing activities and contributing to research advancements, practitioners can drive innovation, expand the collective knowledge base, and propel the evolution of distributed model training methodologies.

Conclusion

Navigating the challenges of distributed model training requires attention to many areas at once: communication efficiency, synchronization, scalability, security, resource utilization, fault tolerance, federated learning, hyperparameter tuning, bias mitigation, monitoring, and collaborative research. Addressing them proactively lets practitioners get the full benefit of distributed training.

Advancements in Distributed Model Training

Decentralized Training Approaches

The traditional centralized approach to model training aggregates data from multiple sources on a central server for processing. Decentralized approaches instead distribute the training process across multiple nodes or devices, which allows parallel processing and reduces the need for large-scale data transfers. The shift has been driven by growing data volumes and the need for more efficient training methods. Spreading the workload across nodes improves scalability and reduces the risk of the bottlenecks that often occur in centralized systems.

Efficient Parameter Servers

Parameter servers store and update model parameters on behalf of the workers in distributed training. Recent advancements have focused on making them more efficient through techniques such as model sharding, which divides the parameters across multiple servers so that no single server carries all the traffic, reducing per-server communication load and improving scalability. Advances in parameter server architecture, such as hybrid memory systems and smarter data partitioning strategies, have also contributed to faster training times and better resource utilization.
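To make model sharding concrete, the sketch below assigns parameter tensors to servers with a greedy, largest-first heuristic so that each server holds a similar number of parameters. This is one simple placement policy chosen for illustration, not a description of any particular parameter server. The layer names and sizes are hypothetical.

```python
from typing import Dict, List


def shard_parameters(param_sizes: Dict[str, int], num_servers: int) -> List[List[str]]:
    """Greedily assign each parameter tensor to the least-loaded server."""
    shards: List[List[str]] = [[] for _ in range(num_servers)]
    loads = [0] * num_servers
    # Placing the largest tensors first keeps the final loads close together.
    for name in sorted(param_sizes, key=param_sizes.get, reverse=True):
        target = loads.index(min(loads))
        shards[target].append(name)
        loads[target] += param_sizes[name]
    return shards


# Hypothetical layer names and parameter counts.
sizes = {"embedding": 600, "layer1": 300, "layer2": 300, "head": 100}
shards = shard_parameters(sizes, num_servers=2)
```

Workers then send each tensor's updates only to the server that owns it, which spreads the traffic instead of concentrating it on one machine.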

Federated Learning Techniques

Federated learning is a decentralized approach in which the model is trained locally on each device’s data and only the model updates are shared with a central server. This addresses privacy concerns associated with centralized training and enables training on data that cannot easily be transferred because of its size or sensitivity. Recent developments have focused on communication efficiency, security, and scalability to support large-scale scenarios. Differential privacy mechanisms, secure aggregation protocols, and adaptive learning rate scheduling have made federated learning more effective in real-world applications. Maturing federated learning frameworks and integration with edge computing have extended decentralized training further, enabling complex models to be trained across a diverse range of devices.
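A differential privacy mechanism for model updates typically has two parts: clipping each update to a maximum norm, then adding calibrated noise. The sketch below shows both under simplifying assumptions. The function names and parameter values are hypothetical, and the privacy accounting needed to state an actual guarantee is not shown.

```python
import math
import random
from typing import List


def clip_update(update: List[float], max_norm: float) -> List[float]:
    """Scale the update down so its L2 norm is at most `max_norm`."""
    norm = math.sqrt(sum(v * v for v in update))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [v * scale for v in update]


def privatize_update(update, max_norm, noise_multiplier, rng):
    """Clip, then add Gaussian noise with std = noise_multiplier * max_norm."""
    clipped = clip_update(update, max_norm)
    std = noise_multiplier * max_norm
    return [v + rng.gauss(0.0, std) for v in clipped]


rng = random.Random(0)
clipped = clip_update([3.0, 4.0], max_norm=1.0)   # norm 5 is scaled down to norm 1
noisy = privatize_update([3.0, 4.0], max_norm=1.0, noise_multiplier=0.5, rng=rng)
```

Clipping bounds how much any single client can influence the aggregate, and the noise masks what remains. More noise gives stronger privacy at the cost of model accuracy.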

Together, these advancements have changed how machine learning models are trained and deployed. Decentralized training approaches, efficient parameter servers, and federated learning techniques have paved the way for scalable, secure, and privacy-preserving training in distributed environments. As the field evolves, researchers and practitioners continue to explore ways to improve the performance and efficiency of distributed training.

Future Trends

Several trends are expected to shape the future of distributed model training and the industries that rely on it. Three stand out.

Edge Computing Integration

Edge computing is gaining momentum as more devices are connected to the internet. By processing data closer to the source, edge computing reduces latency and enhances efficiency. This trend is set to revolutionize industries such as healthcare, manufacturing, and transportation by enabling real-time data processing and analysis. With the rise of Internet of Things (IoT) devices and the need for faster data processing, edge computing is becoming increasingly essential in modern technological architectures.

Enhanced Privacy Preservation

With data privacy becoming a growing concern, companies are focusing on enhancing privacy preservation measures. Technologies like differential privacy and homomorphic encryption are being developed to protect sensitive information while allowing for valuable insights to be extracted. Enhanced privacy preservation is set to be a key focus in the future, ensuring that data remains secure and protected. The implementation of privacy-enhancing technologies will be critical for businesses to maintain consumer trust and comply with evolving data protection regulations.

Automated Hyperparameter Tuning

Hyperparameter tuning plays a crucial role in optimizing machine learning models. As data volumes and model complexity grow, each training run becomes more expensive, and manual tuning becomes increasingly impractical. Automated hyperparameter tuning streamlines the process by using search algorithms to find good hyperparameters for a given model with far less manual effort. This makes model optimization more efficient, and with the need for rapid iteration it is expected to become vital for accelerating innovation in AI applications.
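One family of automated tuning methods saves compute by stopping weak configurations early. The sketch below shows a simplified successive halving loop as an example. `toy_evaluate` is a made-up stand-in for training a model for `budget` epochs and returning its validation loss, and all names are hypothetical.

```python
def successive_halving(configs, evaluate, min_budget=1):
    """Repeatedly keep the better half of `configs`, doubling the budget."""
    survivors, budget = list(configs), min_budget
    while len(survivors) > 1:
        # Lower score is better; each survivor is scored at the current budget.
        ranked = sorted(survivors, key=lambda c: evaluate(c, budget))
        survivors = ranked[: max(1, len(ranked) // 2)]
        budget *= 2
    return survivors[0]


def toy_evaluate(learning_rate, budget):
    # Stand-in for validation loss after `budget` epochs of training.
    return abs(learning_rate - 0.1) + 1.0 / budget


best = successive_halving([0.001, 0.01, 0.1, 1.0], toy_evaluate)
```

Most of the budget goes to the configurations that survive the early rounds, which is why this style of search suits expensive training runs.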

Embracing these future trends is essential for businesses and individuals looking to stay competitive in a rapidly evolving technological landscape. By integrating edge computing, enhancing privacy preservation measures, and adopting automated hyperparameter tuning, organizations can drive innovation, improve operational efficiency, and deliver enhanced experiences to their customers. Keeping abreast of these trends and leveraging them effectively will be key to success in the digital age.

The Future of Distributed Model Training

Distributed model training enables faster and more efficient training of complex models, and its role in machine learning is set to grow. As technology advances, the scalability and speed of distributed training systems are likely to keep improving, supporting further breakthroughs in AI research and applications. Organizations that want to stay competitive in artificial intelligence will need to embrace this trend and invest in the necessary infrastructure.