This guide explains how to implement Databricks Lakehouse, a platform that combines features of data lakes and data warehouses into a unified approach to data management and analytics. It gives a step-by-step overview of the work involved, from assessing your data and setting up the environment to optimizing data workflows.

Whether you are a data engineer, data scientist, or business analyst, this guide covers the key concepts, best practices, and practical tips you need to put Databricks Lakehouse to work for your organization’s data needs.

Assessing Data Readiness

Before implementing a Databricks Lakehouse, evaluate whether your data is ready for it. This step sets the foundation for a successful implementation. The key facets to consider during the data readiness assessment are:

Data Quality: Ensuring Accuracy and Relevance

One of the primary factors to evaluate is the quality of your data. It is imperative to ascertain that the data is not only accurate but also complete and relevant to the intended use cases. By conducting a comprehensive data quality analysis, you can identify and rectify any discrepancies or anomalies that might hinder the effectiveness of the Lakehouse.
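
A data quality analysis usually starts with a simple completeness profile. The following is a minimal sketch in plain Python, assuming records arrive as dictionaries; the function name `profile_completeness` and the sample fields are hypothetical, and on a real Lakehouse the same idea would be applied to tables rather than in-memory lists.

```python
from collections import Counter

def profile_completeness(records, required_fields):
    """Return, per field, the fraction of records with a non-empty value."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in required_fields}
    filled = Counter()
    for record in records:
        for field in required_fields:
            value = record.get(field)
            # Treat both None and the empty string as missing.
            if value is not None and value != "":
                filled[field] += 1
    return {field: filled[field] / total for field in required_fields}

# Hypothetical sample data for illustration only.
sample = [
    {"order_id": 1, "customer": "acme", "amount": 120.0},
    {"order_id": 2, "customer": "", "amount": 80.0},
    {"order_id": 3, "customer": "globex", "amount": None},
    {"order_id": 4, "customer": "initech", "amount": 42.5},
]
report = profile_completeness(sample, ["order_id", "customer", "amount"])
print(report)
```

Fields whose completeness falls below a threshold you choose are candidates for cleanup before ingestion.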

Data Structure Evaluation: Aligning with Lakehouse Requirements

Another crucial aspect is to assess the structure of your data. Evaluate the format in which the data is currently stored, such as CSV, JSON, or Parquet, and ensure that it aligns seamlessly with the requirements of the Databricks Lakehouse. This alignment is vital for smooth data ingestion and processing within the Lakehouse environment.
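
One way to begin the structure evaluation is to take an inventory of the formats you currently hold. The sketch below assumes you can list your source file paths; the paths shown are made up, and the helper `inventory_formats` is a hypothetical name, not part of any Databricks API.

```python
from collections import Counter
from pathlib import PurePosixPath

def inventory_formats(paths):
    """Count files per lowercase extension; files without one count as 'unknown'."""
    return Counter(
        PurePosixPath(path).suffix.lower().lstrip(".") or "unknown"
        for path in paths
    )

# Hypothetical file listing for illustration only.
paths = [
    "raw/sales/2023.csv",
    "raw/events/clicks.JSON",
    "curated/orders/part-0000.parquet",
    "raw/notes/README",
]
formats = inventory_formats(paths)
print(formats)
```

The resulting counts show how much data is already in each format and how much is in formats you have not yet planned for.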

Data Consistency Check: Ensuring Usability

In addition to quality and structure, data consistency plays a pivotal role in determining the usability of the data within the Lakehouse. Detecting any inconsistencies or discrepancies in the data early on can prevent downstream issues during analysis and reporting. Therefore, conducting a thorough data consistency check is essential.
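
Two common consistency checks are duplicate keys and references to records that do not exist. The following plain-Python sketch illustrates both under the assumption that each dataset fits in memory as a list of dictionaries; the function names and sample tables are hypothetical.

```python
from collections import Counter

def find_duplicate_keys(records, key):
    """Return the sorted key values that appear more than once."""
    counts = Counter(record[key] for record in records)
    return sorted(value for value, n in counts.items() if n > 1)

def find_orphans(children, foreign_key, parents, primary_key):
    """Return child records whose foreign key matches no parent record."""
    parent_keys = {record[primary_key] for record in parents}
    return [record for record in children if record[foreign_key] not in parent_keys]

# Hypothetical sample data for illustration only.
customers = [{"customer_id": 1}, {"customer_id": 2}]
orders = [
    {"order_id": 10, "customer_id": 1},
    {"order_id": 11, "customer_id": 3},  # no such customer
    {"order_id": 10, "customer_id": 2},  # order_id reused
]
duplicates = find_duplicate_keys(orders, "order_id")
orphans = find_orphans(orders, "customer_id", customers, "customer_id")
print(duplicates, orphans)
```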

Infrastructure Requirements for a Robust Setup

Setting up a Databricks Lakehouse requires infrastructure that can support its operations effectively. When planning the infrastructure, consider the following key aspects:

Compute Resources Allocation

Determine the optimal amount of computational power required based on the volume and complexity of data processing tasks. Adequate compute resources are essential for executing data transformations, machine learning models, and other analytical processes efficiently.

Storage Capacity Estimation

Estimate the storage requirements for housing both raw and processed data within the Lakehouse environment. Properly allocating storage capacity is crucial for accommodating data growth and ensuring seamless access to historical and real-time data.
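
A rough projection can make the storage estimate concrete. The sketch below assumes raw data grows at a constant monthly rate and that processed data adds a fixed fraction on top of raw data; both assumptions, the function name, and the numbers are illustrative only and should be replaced with your own measurements.

```python
def project_storage_tb(raw_tb, monthly_growth, months, processed_ratio):
    """Project total storage after `months` of compound growth.

    raw_tb:          current raw data volume in terabytes
    monthly_growth:  growth rate per month (0.05 means 5%)
    processed_ratio: size of processed data as a fraction of raw data
    """
    projected_raw = raw_tb * (1 + monthly_growth) ** months
    return projected_raw * (1 + processed_ratio)

# Hypothetical inputs: 10 TB today, 5% monthly growth, one year ahead,
# processed copies adding another 50% on top of raw data.
estimate = project_storage_tb(10.0, 0.05, 12, 0.5)
print(round(estimate, 1))
```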

Networking Capabilities for Seamless Communication

Ensure that your infrastructure is equipped with reliable and high-speed networking capabilities to facilitate seamless data transfers and communication between various components of the Lakehouse. A robust network infrastructure is vital for maintaining data consistency and minimizing latency.

Security Measures Implementation

Implement stringent security measures to safeguard sensitive data stored within the Databricks Lakehouse. Data encryption, access controls, and regular security audits are essential components of a comprehensive security strategy to protect against potential threats and breaches.

By carefully assessing data readiness and planning the necessary infrastructure components, organizations can lay a solid groundwork for a successful Databricks Lakehouse implementation.

Steps for Implementing Databricks Lakehouse

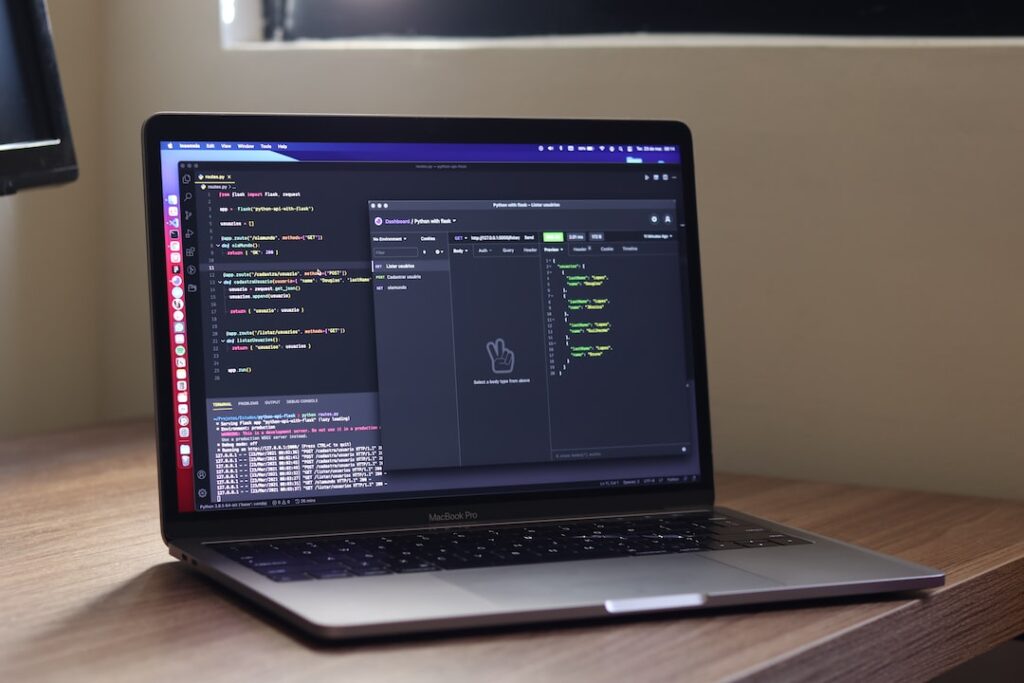

Setting up Databricks Environment

In this initial phase of implementing a Databricks Lakehouse, the focus is on creating a robust foundation for your data processing needs. Setting up the Databricks environment involves tasks such as provisioning the necessary resources, configuring security settings, and establishing access controls to ensure data privacy and integrity.

Ingesting and Transforming Data

Once the environment is set up, the next crucial step is ingesting and transforming data. Data ingestion involves loading data from various sources into the Databricks Lakehouse, while data transformation focuses on cleaning, structuring, and enriching the data to make it suitable for analysis. Implementing efficient data ingestion and transformation processes is key to generating valuable insights from your data.
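
The split between ingestion and transformation can be sketched in a few lines. The example below uses only the Python standard library and an inline CSV string as a stand-in for a real source; the column names, the cleaning rules, and the function names `ingest` and `transform` are assumptions made for illustration.

```python
import csv
import io

# Hypothetical raw input standing in for a real source file.
RAW_CSV = """order_id,customer,amount
1, Acme ,120.50
2,Globex,not_a_number
3,,15
4,Initech,42
"""

def ingest(text):
    """Load CSV text into a list of row dictionaries without altering values."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Clean rows; return (cleaned, rejected) so bad rows are kept for review."""
    cleaned, rejected = [], []
    for row in rows:
        customer = row["customer"].strip().lower()
        try:
            amount = float(row["amount"])
        except ValueError:
            rejected.append(row)  # amount is not numeric
            continue
        if not customer:
            rejected.append(row)  # customer is missing
            continue
        cleaned.append(
            {"order_id": int(row["order_id"]), "customer": customer, "amount": amount}
        )
    return cleaned, rejected

cleaned, rejected = transform(ingest(RAW_CSV))
print(cleaned)
print(len(rejected), "rows rejected")
```

Keeping rejected rows rather than silently dropping them makes it easier to trace quality problems back to the source.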

Building Data Pipelines

Data pipelines orchestrate the flow of data within the Databricks Lakehouse. Designing and implementing robust pipelines with Apache Spark, the processing engine that Databricks is built on, lets you automate data movement, transformation, and processing tasks. Well-structured data pipelines streamline your data workflows and help ensure data consistency and reliability.
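
At its core, a pipeline is an ordered list of steps, each taking the output of the previous one. The following is a minimal plain-Python sketch of that idea, not a Spark or Databricks job; the step names, the `run_pipeline` helper, and the sample rows are hypothetical.

```python
def run_pipeline(data, steps):
    """Apply each (name, function) step in order and record row counts."""
    history = [("input", len(data))]
    for name, step in steps:
        data = step(data)
        history.append((name, len(data)))
    return data, history

def drop_incomplete(rows):
    """Keep only rows in which every value is present."""
    return [row for row in rows if all(v is not None for v in row.values())]

def deduplicate(rows):
    """Keep the first row seen for each order_id."""
    seen, unique = set(), []
    for row in rows:
        if row["order_id"] not in seen:
            seen.add(row["order_id"])
            unique.append(row)
    return unique

def add_total(rows):
    """Add a derived 'total' column to every row."""
    return [{**row, "total": row["quantity"] * row["unit_price"]} for row in rows]

# Hypothetical sample data for illustration only.
rows = [
    {"order_id": 1, "quantity": 2, "unit_price": 5.0},
    {"order_id": 1, "quantity": 2, "unit_price": 5.0},
    {"order_id": 2, "quantity": None, "unit_price": 3.0},
    {"order_id": 3, "quantity": 1, "unit_price": 7.5},
]
result, history = run_pipeline(
    rows,
    [("drop_incomplete", drop_incomplete), ("deduplicate", deduplicate), ("add_total", add_total)],
)
print(history)
```

Recording the row count after each step gives a cheap audit trail: an unexpected drop points directly at the step responsible.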

Implementing Data Governance

Data governance is a fundamental aspect of data management that focuses on ensuring data quality, compliance, and security. Establishing data governance policies within the Databricks Lakehouse involves defining data ownership, access controls, data lineage, and data quality standards. By implementing comprehensive data governance practices, organizations can enhance trust in their data assets and mitigate risks associated with data misuse or unauthorized access.

Optimizing Performance

Optimizing performance is critical for maximizing the efficiency of data processing and analysis tasks in the Databricks environment. Performance optimization techniques include tuning query execution, leveraging caching mechanisms, partitioning data for parallel processing, and utilizing advanced optimization features offered by Databricks. By continuously monitoring and fine-tuning performance parameters, organizations can achieve faster query processing and improve overall data processing throughput.
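
To illustrate why partitioning enables parallel processing, the sketch below hash-partitions records by key so that every record for a given key lands in the same partition. Each partition can then be aggregated independently and the results merged without conflicts. This is a conceptual plain-Python example, not the mechanism Databricks itself uses; the function names and sample data are hypothetical.

```python
import zlib
from collections import defaultdict

def partition_by_key(records, key, num_partitions):
    """Assign each record to a partition using a stable hash of its key."""
    partitions = defaultdict(list)
    for record in records:
        # crc32 is deterministic across runs, unlike Python's built-in hash for str.
        index = zlib.crc32(str(record[key]).encode("utf-8")) % num_partitions
        partitions[index].append(record)
    return dict(partitions)

def total_by_key(partition, key, value):
    """Aggregate one partition on its own; needs no data from other partitions."""
    totals = defaultdict(float)
    for record in partition:
        totals[record[key]] += record[value]
    return dict(totals)

# Hypothetical sample data for illustration only.
records = [
    {"customer": "acme", "amount": 10.0},
    {"customer": "globex", "amount": 5.0},
    {"customer": "acme", "amount": 20.0},
    {"customer": "initech", "amount": 7.5},
]
partitions = partition_by_key(records, "customer", 4)

# Keys never span partitions, so per-partition results merge with a plain update.
merged = {}
for partition in partitions.values():
    merged.update(total_by_key(partition, "customer", "amount"))
print(merged)
```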

Ensuring Data Quality

Data quality assurance is essential for deriving accurate and reliable insights from data stored in the Databricks Lakehouse. Monitoring data quality metrics, implementing data validation checks, and establishing data quality monitoring processes are vital steps in ensuring data consistency and integrity. By employing data quality tools and practices, organizations can proactively identify and address data quality issues, thereby enhancing the trustworthiness of their analytical results.
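
Validation checks can be expressed as named rules that each record must satisfy. The sketch below is one possible shape for such checks in plain Python; the rule names, the allowed currency set, and the `validate` helper are assumptions for illustration, not a Databricks feature.

```python
# Hypothetical rules: each is a (name, predicate) pair applied to one record.
RULES = [
    ("amount_non_negative", lambda record: record["amount"] >= 0),
    ("currency_is_known", lambda record: record["currency"] in {"USD", "EUR"}),
]

def validate(records, rules):
    """Return, per rule name, the indexes of the records that fail it."""
    failures = {name: [] for name, _ in rules}
    for index, record in enumerate(records):
        for name, check in rules:
            if not check(record):
                failures[name].append(index)
    return failures

# Hypothetical sample data for illustration only.
records = [
    {"amount": 10.0, "currency": "USD"},
    {"amount": -3.0, "currency": "EUR"},
    {"amount": 7.0, "currency": "XYZ"},
]
failures = validate(records, RULES)
print(failures)
```

Tracking the failure count per rule over time turns these checks into the data quality metrics described above.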

Best Practices for Databricks Lakehouse

Data Security Measures

Data security is critical when managing sensitive data within a Databricks Lakehouse. Robust security measures safeguard the integrity and confidentiality of the stored information. Encryption protects data at rest and in transit, so that data which is intercepted or copied cannot be read without the keys. Access control mechanisms restrict who can read or modify the data and help prevent breaches. Compliance with data protection regulations such as GDPR and HIPAA is crucial to avoid legal consequences and maintain trust with customers.

Monitoring and Troubleshooting

Monitoring and troubleshooting are indispensable practices to maintain the health and performance of a Databricks Lakehouse environment. Setting up comprehensive monitoring tools allows organizations to proactively identify issues and anomalies, enabling timely intervention to prevent potential disruptions. Leveraging logging mechanisms helps in tracking system activities, while alerts and performance tuning techniques aid in optimizing resource utilization and enhancing overall efficiency.
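
A basic alerting rule compares observed metrics against limits. The following sketch shows the idea in plain Python; the metric names, limits, and the `find_breaches` helper are hypothetical, and a production setup would read these values from your monitoring system rather than from literals.

```python
def find_breaches(metrics, limits):
    """Return (metric, observed, limit) for every metric above its limit."""
    return [
        (name, metrics[name], limit)
        for name, limit in sorted(limits.items())
        if name in metrics and metrics[name] > limit
    ]

# Hypothetical metrics and limits for illustration only.
metrics = {"job_duration_minutes": 95, "failed_tasks": 0, "storage_used_pct": 91}
limits = {"job_duration_minutes": 60, "failed_tasks": 0, "storage_used_pct": 85}

breaches = find_breaches(metrics, limits)
for name, observed, limit in breaches:
    print(f"ALERT {name}: {observed} exceeds limit {limit}")
```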

Scalability Considerations

Scalability is a key consideration for organizations dealing with large volumes of data in the Databricks Lakehouse. Factors such as data volume handling, resource allocation, and optimization strategies play a crucial role in ensuring seamless scalability. By adopting horizontal scaling approaches, organizations can distribute data processing workloads across multiple nodes, thereby enhancing performance and accommodating growing data demands. Vertical scaling, on the other hand, involves upgrading system resources to handle increased data processing requirements efficiently.

Collaboration and Knowledge Sharing

Fostering collaboration and knowledge sharing among data teams is vital for maximizing the benefits of Databricks Lakehouse. Features like collaborative notebooks enable team members to work together on projects, share insights, and provide feedback in real time. Version control capabilities help track changes made to data pipelines and analyses, ensuring transparency and accountability within the team. Integrations with popular tools and platforms further support collaboration and streamline workflows.

Ensuring Data Quality

Maintaining high data quality is essential for reliable decision-making and analysis within a Databricks Lakehouse environment. Data quality checks, data profiling, and validation processes help identify and rectify inconsistencies or errors in the data. By establishing data quality standards and regular monitoring protocols, organizations can ensure that the data stored in the Lakehouse remains accurate, complete, and consistent, enhancing the trustworthiness of analytical outcomes.

Cost Optimization Strategies

Optimizing costs associated with Databricks Lakehouse operations is crucial for maximizing ROI and efficiency. Employing cost monitoring tools and practices can help organizations track and analyze expenditure related to storage, compute resources, and data processing tasks. Implementing cost-effective storage solutions, leveraging auto-scaling features based on workload demands, and optimizing query performance can contribute to significant cost savings. Regular cost reviews and optimizations based on usage patterns and business requirements enable organizations to align their spending with strategic objectives and financial goals.
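
A back-of-the-envelope comparison shows why auto-scaling can reduce compute cost. The sketch below assumes cost is simply node-hours multiplied by a flat rate; the hourly demand figures and the rate of 0.5 per node-hour are invented for illustration and do not reflect actual Databricks pricing.

```python
def cluster_cost(nodes_per_hour, rate_per_node_hour):
    """Cost of running the given number of nodes in each hour at a flat rate."""
    return sum(nodes_per_hour) * rate_per_node_hour

# Hypothetical demand: nodes needed in each of six consecutive hours.
demand = [2, 2, 8, 8, 3, 2]
RATE = 0.5  # hypothetical price per node-hour

# A fixed cluster must be sized for the peak in every hour.
fixed = cluster_cost([max(demand)] * len(demand), RATE)
# An idealized auto-scaled cluster runs exactly the nodes needed each hour.
autoscaled = cluster_cost(demand, RATE)

print(fixed, autoscaled)
```

Real auto-scaling reacts with some delay, so actual savings are smaller than this idealized figure, but the comparison is a useful starting point for a cost review.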

Continuous Training and Skill Development

Investing in continuous training and skill development for data professionals working with Databricks Lakehouse is essential for keeping up with evolving technologies and best practices. Providing access to training resources, workshops, and certification programs helps teams use the Lakehouse platform to its full potential. Encouraging participation in industry events, conferences, and knowledge-sharing forums fosters a culture of learning within the organization. With a workforce skilled in Databricks Lakehouse technologies, organizations can improve operations, innovate, and maintain a competitive edge in the dynamic data landscape.

Future Trends in Databricks Lakehouse

In recent years, Databricks Lakehouse has emerged as a powerful solution for organizations looking to harness the potential of big data. By combining the best features of data lakes and data warehouses, Databricks Lakehouse offers a comprehensive platform for storing, managing, and analyzing vast amounts of data. As we look towards the future, several key trends are expected to shape the evolution of Databricks Lakehouse.

AI and ML Integration: Transforming Data Insights

One of the most exciting trends in Databricks Lakehouse is the seamless integration of artificial intelligence (AI) and machine learning (ML) capabilities. This integration revolutionizes data insights by enabling advanced analytics, predictive modeling, and automated decision-making processes within the Lakehouse environment. With AI and ML at the core of data operations, organizations can uncover valuable patterns, anomalies, and trends that drive innovation and competitive advantage.

Real-time Analytics: Empowering Agile Decision-Making

Another pivotal trend shaping the future of Databricks Lakehouse is the increasing emphasis on real-time analytics. Timely data insights are crucial for agile decision-making. Databricks Lakehouse lets organizations process and analyze data with low latency as it arrives, enabling real-time monitoring, trend identification, and rapid response to market shifts. By leveraging real-time analytics, businesses can improve operational efficiency, customer experiences, and strategic planning.

IoT Data Processing: Unleashing the Potential of Connected Devices

The Internet of Things (IoT) continues to catalyze innovation in data processing, and Databricks Lakehouse is at the forefront of enabling organizations to harness the full potential of IoT data. With the exponential growth of connected devices and sensors, organizations are tasked with processing and deriving insights from vast streams of IoT data. Databricks Lakehouse provides a robust framework for scalable IoT data processing, facilitating real-time monitoring, anomaly detection, and predictive analytics. By leveraging IoT data within the Lakehouse environment, organizations can optimize operational processes, enhance predictive maintenance strategies, and unlock new revenue streams.

Embracing the Future: Innovating with Databricks Lakehouse

The future of Databricks Lakehouse is brimming with opportunities for organizations to innovate and thrive in the data-driven era. By embracing trends such as AI and ML integration, real-time analytics, and IoT data processing, businesses can unlock the full potential of their data assets, drive actionable insights, and propel growth. As Databricks Lakehouse continues to evolve, organizations that leverage its capabilities stand to gain a competitive edge, drive operational excellence, and chart a successful path towards digital transformation.

Conclusion

Implementing Databricks Lakehouse can transform the way organizations manage and derive insights from their data. By combining the best features of data lakes and data warehouses, Databricks Lakehouse offers a scalable, cost-effective, and efficient solution for modern data analytics. Embracing this technology can lead to improved data quality, faster decision-making, and greater innovation across various industries. As organizations strive to become more data-driven, Databricks Lakehouse stands out as a powerful tool to unlock the full potential of their data assets.