Multimodal AI is reshaping how machines perceive and engage with the world. By integrating text, images, speech, and other data types, AI systems can draw on several sources of information at once, which supports a deeper understanding of complex situations and, in turn, more precise analyses and better-informed decisions. This convergence of modalities has the potential to significantly improve human-computer interaction and drive innovation in sectors such as healthcare, autonomous vehicles, and customer service. Explore with us how multimodal AI systems work, where they are applied, and which challenges and ethical questions they raise.

How Multimodal AI Works

Integration of Different Modalities

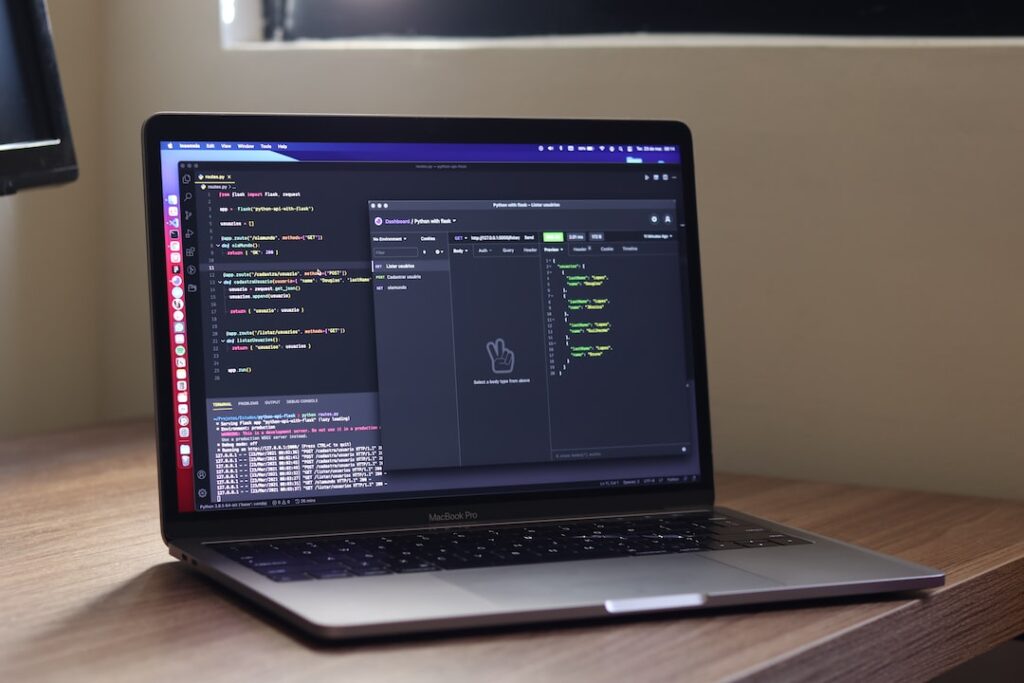

Multimodal AI operates by integrating diverse modalities, such as text, images, video, and audio, to gain a holistic understanding of data. Because the system processes information from several sources together, one input can supply context that another lacks: a caption can disambiguate an image, and tone of voice can change the meaning of a transcript. By combining textual data with visual and auditory inputs, multimodal AI can extract deeper insights and support better decisions across a wide range of applications.

Deep Learning and Multimodal Data Processing

Deep learning plays a pivotal role in multimodal AI systems. Deep neural networks let these systems learn complex patterns and relationships within and across modalities. The process involves extracting high-level features from each modality and merging them into a unified representation that downstream tasks can use. Such architectures help multimodal AI capture context, semantics, and nuance, improving accuracy in tasks such as natural language processing and image recognition.
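The extract-then-merge step can be illustrated with a minimal sketch. It is not a deep network: the two "encoders" are deliberately simple stand-ins (word counts for text, intensity statistics for an image), and the function names, vocabulary, and inputs are hypothetical. The fusion step, normalizing each modality and concatenating, is one common way to build a joint representation (often called concatenation-based fusion), shown here under those assumptions.

```python
import math
from collections import Counter


def text_features(text, vocab):
    """Bag-of-words counts over a fixed vocabulary (stand-in for a text encoder)."""
    counts = Counter(text.lower().split())
    return [float(counts[word]) for word in vocab]


def image_features(pixels):
    """Mean and standard deviation of intensities (stand-in for an image encoder)."""
    mean = sum(pixels) / len(pixels)
    variance = sum((p - mean) ** 2 for p in pixels) / len(pixels)
    return [mean, math.sqrt(variance)]


def l2_normalize(vec):
    """Scale a vector to unit length so no modality dominates by magnitude."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm > 0 else list(vec)


def fuse(*feature_vectors):
    """Normalize each modality's features, then concatenate them."""
    fused = []
    for vec in feature_vectors:
        fused.extend(l2_normalize(vec))
    return fused


# Hypothetical inputs for illustration only.
vocab = ["cat", "dog", "sofa"]
text_vec = text_features("A cat on a sofa", vocab)
image_vec = image_features([0.0, 0.5, 1.0, 0.5])
joint = fuse(text_vec, image_vec)
print(len(joint), joint)
```

In a real system the stand-in encoders would be replaced by learned networks, and the concatenated vector would typically feed further layers that learn cross-modal interactions.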

Applications of Multimodal AI Systems

Multimodal AI systems are versatile enough to serve many industries, changing established processes and improving user experiences. In healthcare, these systems aid medical diagnosis by combining medical images, patient records, and diagnostic reports to give healthcare professionals a more complete picture. Autonomous vehicles use multimodal AI for real-time decision-making based on inputs from cameras, LiDAR, radar, and other sensors, supporting safe navigation and efficient operation on roads.
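To make the sensor-combination idea concrete, here is a small sketch of inverse-variance weighting, a standard way to merge several noisy estimates of the same quantity. It is an illustration only, not how any particular vehicle stack works: the readings and variances are invented, and the method assumes the sensors' errors are independent and unbiased.

```python
def fuse_estimates(readings):
    """Combine (value, variance) pairs by inverse-variance weighting.

    More certain sensors (smaller variance) get more weight.
    Returns the fused value and the variance of the fused estimate.
    """
    weights = [1.0 / variance for _, variance in readings]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, readings)) / total
    return value, 1.0 / total


# Hypothetical distance-to-obstacle readings in metres: (estimate, variance).
readings = [
    (20.0, 4.0),  # camera
    (19.0, 1.0),  # LiDAR
    (21.0, 4.0),  # radar
]
distance, variance = fuse_estimates(readings)
print(distance, variance)
```

The fused variance is smaller than that of any single sensor, which is the practical argument for combining them.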

In customer service, multimodal AI enables personalized interactions through sentiment analysis, speech recognition, and image processing. By understanding customer queries across different modalities, businesses can offer tailored solutions and improve customer satisfaction. In education, multimodal AI supports personalized learning through adaptive content delivery based on student responses and engagement.

Multimodal AI also enhances security measures by integrating data from surveillance cameras, motion sensors, and access control systems to detect anomalies and potential threats in real-time. In the entertainment industry, these systems enable immersive experiences through enhanced content recommendation, emotion recognition, and interactive storytelling.

With its transformative potential across healthcare, transportation, customer service, education, security, and entertainment, multimodal AI continues to drive innovation and efficiency in diverse domains, promising a future where human-machine interactions are more intuitive, personalized, and impactful.

Challenges and Opportunities

Multimodal AI presents both challenges to overcome and opportunities to pursue. Let’s look at some of the key aspects:

- Overcoming Data Fusion Challenges

Data fusion is a critical aspect of Multimodal AI, where information from different modalities such as text, image, and audio needs to be integrated effectively. Challenges in this area include aligning disparate data types, dealing with missing or incomplete data, and ensuring data privacy and security. Overcoming these challenges requires advanced algorithms for feature extraction, data alignment, and fusion techniques. Additionally, advancements in federated learning and differential privacy can contribute to addressing privacy concerns in data fusion processes.
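One of the challenges named above, missing or incomplete data, can be sketched in a few lines. The example assumes each modality has already been encoded into a vector of the same length and simply averages whichever modalities are present for a sample. The function name and sample values are hypothetical, and averaging is only one simple choice among many fusion strategies.

```python
def fuse_available(embeddings):
    """Average the embeddings of the modalities that are present.

    `embeddings` maps a modality name to a list of floats, or to None when
    that modality is missing for this sample. All present vectors must
    share the same length.
    """
    present = [vec for vec in embeddings.values() if vec is not None]
    if not present:
        raise ValueError("at least one modality is required")
    dim = len(present[0])
    if any(len(vec) != dim for vec in present):
        raise ValueError("embeddings must share one dimension")
    return [sum(vec[i] for vec in present) / len(present) for i in range(dim)]


# Hypothetical sample where the audio track is missing.
sample = {"text": [1.0, 3.0], "image": [3.0, 5.0], "audio": None}
fused = fuse_available(sample)
print(fused)
```

Dividing by the number of present modalities, rather than the total, keeps the fused vector on the same scale whether or not a modality is missing.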

- Enhancing User Experience

Multimodal AI has the potential to revolutionize user experience across various applications such as virtual assistants, autonomous vehicles, and healthcare. By leveraging multiple modalities, systems can better understand user intent, provide more personalized interactions, and offer richer content experiences. However, achieving these enhancements requires a deep understanding of user preferences, seamless integration of modalities, and continuous learning and adaptation. Embracing human-centered design principles and conducting user studies can further enhance the user experience in multimodal systems.

- Future Trends in Multimodal AI

Looking ahead, the future of Multimodal AI holds immense promise. Some of the key trends that are expected to shape the field include the rise of self-supervised learning for multimodal tasks, the integration of symbolic reasoning with deep learning approaches, and the development of more interpretable and explainable models. Additionally, advancements in hardware acceleration, such as specialized AI chips, and the proliferation of edge computing are poised to drive further innovation in Multimodal AI. Exploring the potential applications of multimodal AI in emerging fields like augmented reality and robotics can open up new avenues for research and development.

While challenges in data fusion persist, the opportunities for enhancing user experience and exploring future trends in Multimodal AI are vast. By addressing these challenges and leveraging the opportunities presented, researchers and practitioners can unlock the full potential of Multimodal AI for a wide range of applications. The interdisciplinary nature of Multimodal AI, combining computer vision, natural language processing, and audio processing, underscores the need for collaboration across domains to drive innovation and create impactful solutions.

Ethical Considerations in Multimodal AI

Privacy Concerns

When data from multiple sources such as images, audio, and text is combined, privacy concerns become paramount. The privacy of individuals must be respected and protected throughout the entire data processing pipeline. This involves implementing robust data anonymization techniques, obtaining informed consent from data subjects, and securely storing and handling sensitive information. Privacy regulations such as GDPR and CCPA play a significant role in dictating how personal data should be handled in AI systems. Companies and developers must adhere to these regulations to avoid legal consequences and maintain trust with users.
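As a rough illustration of one step in such a pipeline, the sketch below drops a direct identifier and replaces a record ID with a keyed hash before the record is linked across modalities. The field names, record, and key are hypothetical. Note that this is pseudonymization rather than full anonymization: whoever holds the key can re-link the records, so the output should still be treated as personal data.

```python
import hashlib
import hmac


def pseudonymize(identifier, secret_key):
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def scrub_record(record, secret_key, id_fields=("patient_id",), drop_fields=("name",)):
    """Return a copy with direct identifiers dropped and ID fields pseudonymized."""
    cleaned = {k: v for k, v in record.items() if k not in drop_fields}
    for field in id_fields:
        if field in cleaned:
            cleaned[field] = pseudonymize(str(cleaned[field]), secret_key)
    return cleaned


# Hypothetical key and record; in practice the key would come from a secret store.
key = b"example-key-load-from-a-secret-store"
record = {
    "patient_id": "12345",
    "name": "Jane Doe",
    "scan": "scan_001.png",
    "note": "follow-up in 6 weeks",
}
clean = scrub_record(record, key)
print(clean)
```

Because the same ID always maps to the same digest under one key, an image and a text note for the same person can still be joined without exposing the original identifier. Free-text fields such as the note may still contain identifying details and would need separate handling.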

Bias and Fairness in Multimodal AI

Another important ethical consideration in multimodal AI is bias, and with it the need for fairness in algorithmic decision-making. Biases present in training data can lead to skewed or discriminatory outcomes, especially when dealing with diverse datasets. It is essential to continuously monitor and address biases in AI models, and to strive for fairness and transparency in the development and deployment of multimodal AI systems. Techniques like fairness-aware modeling and bias detection algorithms can help identify and mitigate biases. Moreover, promoting diversity and inclusivity in AI teams can lead to more equitable AI solutions that consider a broader range of perspectives and experiences.
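A very simple bias check can be sketched as follows. It computes the demographic parity difference, the gap between the highest and lowest rates of positive predictions across groups. The predictions and group labels are invented for illustration, and this is only one of several fairness metrics; a small gap on this measure does not by itself show that a model is fair.

```python
from collections import defaultdict


def positive_rates(predictions, groups):
    """Share of positive (1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group positive rates (0 means parity)."""
    rates = positive_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical model outputs and group membership.
preds = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap = demographic_parity_difference(preds, groups)
print(positive_rates(preds, groups), gap)
```

Tracking a metric like this over time is one way to put the "continuously monitor" advice into practice.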

Data Protection and Security

Apart from privacy concerns, data protection and security are crucial ethical considerations in multimodal AI. Ensuring the integrity and confidentiality of data is essential to prevent unauthorized access and data breaches. Encryption, secure data transfer protocols, and regular security audits all help maintain data security. Additionally, designing AI systems with security in mind from the start and complying with industry standards can strengthen the overall security posture of multimodal AI solutions.
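The integrity half of that requirement can be sketched with Python's standard library: a keyed tag (HMAC-SHA256) is attached to each payload so that tampering in transit or at rest can be detected. The key and payload here are hypothetical. This sketch does not provide confidentiality; encrypting the payload would require a vetted cryptography library and is outside its scope.

```python
import hashlib
import hmac


def sign(payload, key):
    """Return an HMAC-SHA256 tag for the payload bytes."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(payload, tag, key):
    """Recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign(payload, key), tag)


# Hypothetical key and sensor payload.
key = b"example-key-load-from-a-secret-store"
frame = b"camera-03 frame bytes"
tag = sign(frame, key)

ok = verify(frame, tag, key)
tampered = verify(frame + b"x", tag, key)
print(ok, tampered)
```

Using `hmac.compare_digest` rather than `==` avoids leaking information through comparison timing.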

Accountability and Transparency

Finally, accountability and transparency are key principles that should guide the development and deployment of multimodal AI systems. Companies and developers should be transparent about how AI systems operate, including the data sources used, the decision-making process, and the potential implications of AI-generated outputs. Establishing clear lines of accountability and responsibility for AI outcomes is essential to address ethical concerns and ensure that AI systems are used responsibly and ethically.

Conclusion

Multimodal AI systems have the potential to change how many industries work. By combining different modes of data such as text, images, and speech, these systems can offer more comprehensive insights and solutions than any single modality alone. As the technology advances, the applications of multimodal AI are likely to expand, leading to more efficient processes, improved user experiences, and new approaches to complex problems. How these systems are developed and adopted will help shape the future of AI technology and its impact on society.