Unlock the full potential

Unlock the full potential of Databricks, a leading data engineering and analytics platform, with proven strategies and best practices. This guide covers techniques for improving efficiency, scalability, and performance, from optimizing data processing pipelines to using the platform's machine learning capabilities. Whether you’re a beginner or an experienced user, these practices can help you get more out of your implementation.

Key Components of a Successful Databricks Implementation

Choosing the Right Databricks Deployment Option

In the realm of data analytics and processing, choosing the appropriate Databricks deployment option is a pivotal decision. Databricks offers deployment choices on major cloud platforms like AWS, Azure, and Google Cloud, each with its unique set of features and integrations. When selecting the right deployment option, organizations need to carefully evaluate factors such as cost-effectiveness, scalability, performance, and compatibility with existing systems. Understanding the specific requirements of the project and aligning them with the capabilities of the deployment options is essential for a successful implementation.

Optimizing Databricks Clusters for Performance

Cluster configuration is fundamental to performance in data processing and analytics tasks. By tuning cluster size, instance types, and auto-scaling settings, organizations can improve both the efficiency and the cost-effectiveness of their data workflows. Features like caching and parallel processing can further boost cluster performance, while cluster-scoped libraries keep dependencies consistent across the jobs that share a cluster. Continuous monitoring of cluster performance shows when these settings need to be revisited.
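To make the cluster settings above concrete, here is a minimal sketch that builds a cluster definition with autoscaling bounds and an idle timeout. The field names follow the Databricks Clusters API, but the helper `build_cluster_spec`, the cluster name, the instance type, the runtime version string, and the bounds check are illustrative assumptions, not recommendations.

```python
def build_cluster_spec(name, node_type_id, spark_version,
                       min_workers=2, max_workers=8,
                       autotermination_minutes=30):
    """Return a cluster definition dict with autoscaling enabled.

    The validation rule (1 <= min_workers <= max_workers) is this sketch's
    own choice, made to catch obviously inverted bounds early.
    """
    if min_workers < 1 or max_workers < min_workers:
        raise ValueError("expected 1 <= min_workers <= max_workers")
    return {
        "cluster_name": name,
        "spark_version": spark_version,
        "node_type_id": node_type_id,
        # Workers are added or removed between these bounds based on load.
        "autoscale": {"min_workers": min_workers, "max_workers": max_workers},
        # Shut the cluster down after this many idle minutes to limit cost.
        "autotermination_minutes": autotermination_minutes,
    }


# Placeholder values; substitute the node type and runtime your workspace offers.
spec = build_cluster_spec("etl-nightly", "i3.xlarge", "13.3.x-scala2.12")
```

A dict like this could be sent to the cluster creation endpoint or kept in version control, so that sizing changes are reviewed like any other code change.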

Implementing Security Best Practices in Databricks

Ensuring robust security measures is a cornerstone of any data platform implementation, and Databricks is no exception. Implementing security best practices involves establishing stringent access controls, data encryption mechanisms, and comprehensive monitoring capabilities. Organizations should enforce data governance policies to safeguard sensitive information, maintain compliance with data protection regulations, and mitigate security risks. By conducting regular security audits, staying updated on security trends, and fostering a culture of security awareness among users, organizations can fortify the security posture of their Databricks environment.

Scalability and Flexibility

Scalability is another crucial aspect of a successful Databricks implementation. Organizations should design their Databricks architecture with scalability in mind, allowing the system to expand as data volumes and processing requirements grow. By leveraging features like cluster autoscaling and workload isolation, organizations can adapt their Databricks environment to evolving business needs without compromising performance or efficiency. Moreover, because Databricks is built on Apache Spark and provides managed MLflow, teams can run diverse data workloads, including machine learning, on the same platform.

Continuous Monitoring and Optimization

To maintain peak performance and efficiency, continuous monitoring and optimization of the Databricks environment are essential. Organizations should establish monitoring protocols to track key performance metrics, detect anomalies, and troubleshoot issues proactively. By leveraging automation tools, organizations can streamline cluster management tasks, optimize resource utilization, and ensure that the Databricks environment operates at peak efficiency. Regular performance tuning, capacity planning, and workload optimization are critical practices to maximize the value derived from Databricks and drive business outcomes.
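As one way to picture "detecting anomalies" in a tracked metric, the sketch below flags job runs whose duration sits far above the recent average. It is plain Python over a list of durations; the function name `find_slow_runs` and the default threshold of two standard deviations are assumptions made for illustration, and the durations would come from whatever run history you already collect.

```python
from statistics import mean, stdev


def find_slow_runs(durations_s, threshold=2.0):
    """Return indices of runs more than `threshold` sample standard
    deviations above the mean duration."""
    if len(durations_s) < 2:
        return []  # not enough history to estimate spread
    mu = mean(durations_s)
    sigma = stdev(durations_s)
    if sigma == 0:
        return []  # all runs took the same time
    return [i for i, d in enumerate(durations_s)
            if (d - mu) / sigma > threshold]


# Ten runs of a hypothetical nightly job, in seconds; the last one is slow.
history = [100, 102, 98, 101, 99, 100, 103, 97, 100, 400]
slow = find_slow_runs(history)
```

A single extreme run inflates the standard deviation, so on short histories a median-based rule may be more robust than this one.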

Training and Skill Development

Investing in training and skill development is paramount to unlocking the full potential of Databricks. Organizations should provide comprehensive training programs to empower users with the knowledge and skills required to leverage Databricks effectively. By fostering a culture of continuous learning and skill enhancement, organizations can drive innovation, improve productivity, and maximize the return on investment in Databricks. Additionally, promoting collaboration and knowledge sharing among Databricks users can foster a community of practice that accelerates skill development and drives organizational success.

Conclusion

A successful Databricks implementation hinges on a strategic approach to choosing deployment options, optimizing cluster performance, and implementing robust security measures. By prioritizing scalability, flexibility, continuous monitoring, and skill development, organizations can harness the full power of Databricks to drive data-driven insights, innovation, and competitive advantage. With a well-executed Databricks implementation strategy, organizations can unlock new opportunities for growth, efficiency, and success in the fast-evolving landscape of data analytics and machine learning.

Best Practices for Databricks Implementation

Data Ingestion Strategies for Databricks

When it comes to data ingestion in Databricks, it is essential to consider factors such as data volume, frequency, and sources. Implementing efficient data ingestion strategies ensures smooth data processing and analysis. Some best practices include:

- Batch Processing: Utilize batch processing for large volumes of data that do not require real-time analysis.
- Stream Processing: For real-time data analysis, consider implementing stream processing with Spark Structured Streaming, reading from sources like Apache Kafka.
- Data Partitioning: Partition data to optimize query performance and parallel processing.
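The partitioning advice above can be sketched in two parts. `partition_by` is a plain-Python illustration of the idea: records are grouped by a key so that a query for one key only touches one group. `write_partitioned` shows the corresponding PySpark writer calls; it assumes `df` is a Spark DataFrame that already has the partition column and that the target is a Delta table. Both function names and the `event_date` column are hypothetical.

```python
from collections import defaultdict


def partition_by(records, key):
    """Group dict records by the value of `key`, mimicking how a
    partitioned table stores each key value in its own directory."""
    parts = defaultdict(list)
    for record in records:
        parts[record[key]].append(record)
    return dict(parts)


def write_partitioned(df, path, partition_col="event_date"):
    """Append a Spark DataFrame to a Delta table partitioned by one column.

    Assumes `df` is a pyspark.sql.DataFrame containing `partition_col`.
    Nothing runs until this function is called on a cluster.
    """
    (df.write
       .format("delta")
       .mode("append")
       .partitionBy(partition_col)
       .save(path))


events = [
    {"event_date": "2024-01-01", "value": 1},
    {"event_date": "2024-01-02", "value": 2},
    {"event_date": "2024-01-01", "value": 3},
]
parts = partition_by(events, "event_date")
```

Choosing a low-cardinality column such as a date usually works better than a high-cardinality one such as a user ID, which would produce many small partitions.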

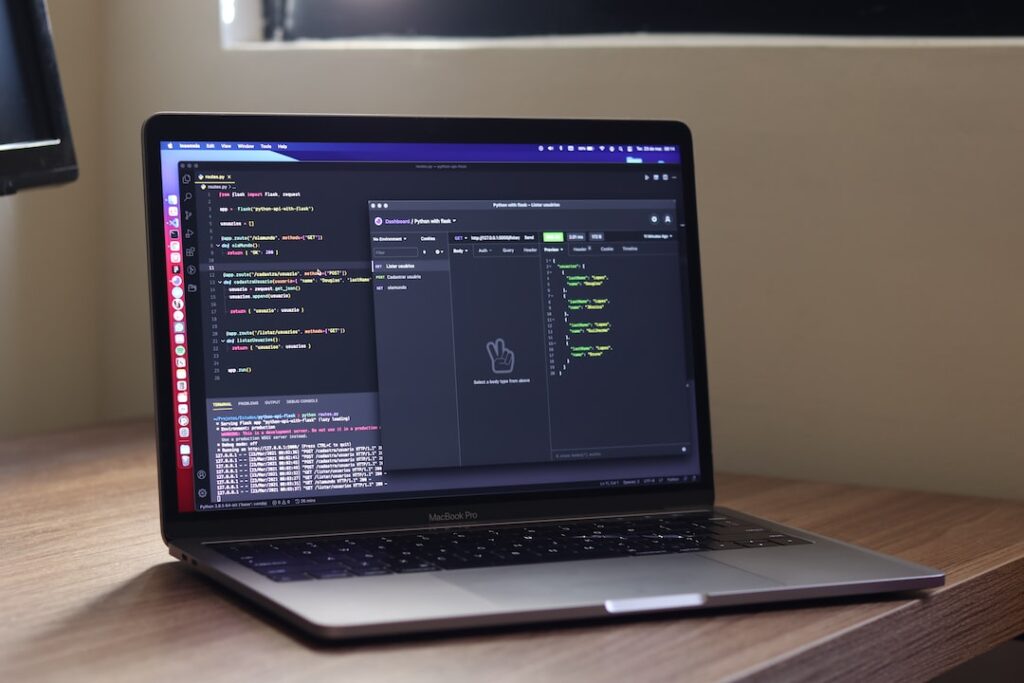

Utilizing Databricks Notebooks Efficiently

Databricks notebooks are powerful tools for data exploration, visualization, and collaboration. To make the most out of Databricks notebooks, consider the following best practices:

- Code Reusability: Modularize your code into functions or libraries for easy reuse across multiple notebooks.
- Markdown Documentation: Use Markdown cells to provide detailed explanations, instructions, and insights alongside your code.
- Version Control: Leverage version control systems like Git to track changes, collaborate with team members, and revert to previous versions if needed.
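As a small illustration of the code reusability point, the sketch below shows the kind of helper that tends to get copied between notebooks and is better kept in one shared, version-controlled module. The functions `to_snake_case` and `rename_columns` are hypothetical examples, not part of any Databricks library.

```python
import re


def to_snake_case(name):
    """Normalize a column name such as 'Order ID' or 'orderDate'
    to snake_case."""
    # Insert an underscore at lower-to-upper boundaries: orderDate -> order_Date
    name = re.sub(r"([a-z0-9])([A-Z])", r"\1_\2", name.strip())
    # Collapse any run of non-alphanumeric characters into one underscore
    name = re.sub(r"[^0-9a-zA-Z]+", "_", name)
    return name.strip("_").lower()


def rename_columns(columns):
    """Apply to_snake_case to every column name in a list."""
    return [to_snake_case(c) for c in columns]


cleaned = rename_columns(["Order ID", "orderDate", " Total-Amount ($) "])
```

Because the helper is a pure function, it can be unit tested outside a notebook and then imported wherever it is needed.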

Monitoring and Troubleshooting in Databricks

Monitoring and troubleshooting are crucial aspects of maintaining a healthy Databricks environment. Follow these best practices to ensure efficient monitoring and proactive issue resolution:

- Cluster Monitoring: Regularly monitor cluster performance, resource utilization, and job execution to identify bottlenecks or issues.
- Logging and Alerting: Set up logging and alerting mechanisms to receive notifications for critical events, errors, or performance degradation.
- Performance Optimization: Continuously optimize queries, code, and cluster configurations to improve performance and reduce costs.
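The logging and alerting item above can be sketched with Python's standard `logging` module. Here a custom handler collects error-level records in a list as a stand-in for a real alert channel such as email or chat. The class `AlertCollector`, the function `check_job`, the logger name, and the duration limit are all assumptions made for illustration.

```python
import logging


class AlertCollector(logging.Handler):
    """Collect ERROR-and-above records; a stand-in for a real alert channel."""

    def __init__(self):
        super().__init__(level=logging.ERROR)
        self.alerts = []

    def emit(self, record):
        # With no formatter set, format() returns the rendered message.
        self.alerts.append(self.format(record))


logger = logging.getLogger("pipeline")
logger.setLevel(logging.INFO)
collector = AlertCollector()
logger.addHandler(collector)


def check_job(job_name, duration_s, max_duration_s):
    """Log the outcome of a job run; return False if it exceeded its limit."""
    if duration_s > max_duration_s:
        logger.error("%s ran %ds, limit %ds",
                     job_name, duration_s, max_duration_s)
        return False
    logger.info("%s finished in %ds", job_name, duration_s)
    return True


ok_fast = check_job("nightly_etl", 300, 600)
ok_slow = check_job("nightly_etl", 900, 600)
```

Routing alerts through a handler keeps the job code unchanged when the alert destination changes.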

Implementing these strategies can significantly enhance the effectiveness and productivity of your Databricks implementation. To further optimize your usage of Databricks, consider exploring advanced features such as MLflow integration for machine learning model tracking, Delta Lake for reliable data lakes, and Databricks SQL (formerly SQL Analytics) for interactive querying. By staying updated on Databricks’ latest features and continuously refining your implementation practices, you can keep pace with the rapidly evolving data analytics landscape.

Advanced Techniques to Supercharge Your Databricks Implementation

Leveraging MLflow for Streamlined Machine Learning Workflows

Incorporating MLflow into your Databricks environment can revolutionize how you manage machine learning workflows. MLflow offers a comprehensive platform for the end-to-end machine learning lifecycle, providing capabilities for experiment tracking, reproducible runs, model packaging, and model deployment. By utilizing MLflow’s tracking features, data scientists can systematically monitor experiments, compare results, and reproduce successful models with ease. This integration not only enhances collaboration within the team but also fosters a culture of experimentation and continuous improvement. Moreover, leveraging MLflow’s model serving capabilities can facilitate seamless deployment and monitoring of machine learning models in production environments, ensuring efficient model lifecycle management and performance tracking.
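A minimal sketch of the experiment tracking described above: MLflow parameters are flat key/value pairs, so a nested training configuration is flattened first and then logged together with the metrics of one run. The helper names `flatten_params` and `log_training_run` and the example configuration are assumptions. The `mlflow` import is deferred so the flattening helper can be used and tested without MLflow installed.

```python
def flatten_params(params, prefix=""):
    """Flatten a nested config dict into dotted keys,
    e.g. {"model": {"eta": 0.1}} -> {"model.eta": 0.1}."""
    flat = {}
    for key, value in params.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten_params(value, prefix=f"{name}."))
        else:
            flat[name] = value
    return flat


def log_training_run(run_name, params, metrics):
    """Record one training run. Requires the mlflow package,
    imported lazily so this module loads without it."""
    import mlflow

    with mlflow.start_run(run_name=run_name):
        mlflow.log_params(flatten_params(params))
        mlflow.log_metrics(metrics)


# Hypothetical training configuration
config = {"model": {"max_depth": 6, "eta": 0.1}, "seed": 42}
flat_config = flatten_params(config)
```

Calling `log_training_run("baseline", config, {"rmse": ...})` after each training attempt would make runs comparable side by side in the tracking UI.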

Enhancing Connectivity: Integrating Databricks with External Tools and Services

Seamless integration of Databricks with external tools and services amplifies the analytical capabilities of your data platform. By connecting Databricks to a diverse array of tools such as Apache Kafka for real-time data streaming, Tableau for interactive data visualization, AWS S3 for scalable storage, and more, you create a versatile ecosystem that optimizes data processing and insights generation. This interoperability enables data engineers and analysts to harness the strengths of different technologies, resulting in a robust analytics infrastructure that drives informed decision-making and innovation. Furthermore, integrating with external services like Azure Data Factory for data orchestration or Azure Machine Learning for advanced analytics can expand the functionalities of Databricks, empowering organizations to derive deeper insights and drive business growth through data-driven strategies.
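For the Kafka integration mentioned above, a streaming read in Spark is configured through a small set of source options. The sketch below builds those options and passes them to Structured Streaming. The option keys are the ones used by Spark's Kafka source; the helper names, broker address, and topic are placeholders, and `spark` is assumed to be an active SparkSession on a cluster with the Kafka connector available.

```python
def kafka_source_options(bootstrap_servers, topic, starting_offsets="latest"):
    """Build the option map for Spark's Kafka streaming source.

    Spark also accepts a JSON string of per-partition offsets for
    startingOffsets; this sketch only handles the two keywords.
    """
    if starting_offsets not in ("latest", "earliest"):
        raise ValueError("starting_offsets must be 'latest' or 'earliest'")
    return {
        "kafka.bootstrap.servers": bootstrap_servers,
        "subscribe": topic,
        "startingOffsets": starting_offsets,
    }


def read_kafka_stream(spark, options):
    """Return a streaming DataFrame over the configured topic.

    Assumes `spark` is an active SparkSession; not executed here.
    """
    return spark.readStream.format("kafka").options(**options).load()


# Placeholder broker and topic names
opts = kafka_source_options("broker-1:9092", "orders", "earliest")
```

The resulting DataFrame exposes the message `key` and `value` as binary columns, so a cast or a schema-aware parse is usually the next step.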

Driving Efficiency Through Automation in Databricks Workflows

Automation lies at the core of operational efficiency in Databricks workflows. By embracing automation tools and scheduling mechanisms within Databricks, organizations can streamline data pipelines, automate routine tasks, and maximize resource utilization. Automated workflows not only reduce manual errors but also enhance the reliability and scalability of data processing operations. With automated job triggering, event-based workflows, and resource optimization, teams can focus on strategic initiatives, data exploration, and model refinement, accelerating the pace of innovation and time-to-insights. Embracing automation not only increases productivity but also sets the foundation for scalable and sustainable data practices, empowering organizations to stay competitive in the rapidly evolving data landscape. Additionally, implementing automated monitoring and alerting mechanisms in Databricks can proactively detect anomalies, improve system performance, and ensure data quality, further enhancing the operational efficiency and reliability of data workflows.
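To illustrate scheduled, multi-step workflows, the sketch below assembles a job definition in which notebooks run one after another on a cron schedule. The field names follow the Databricks Jobs API (version 2.1), but the helper `build_job_spec`, the notebook paths, and the cluster ID placeholder are assumptions for illustration.

```python
def build_job_spec(name, notebook_paths, cron, timezone="UTC",
                   cluster_id="<cluster-id>"):
    """Chain notebooks into sequential tasks and attach a cron schedule."""
    tasks = []
    previous_key = None
    for i, path in enumerate(notebook_paths):
        key = f"step_{i + 1}"
        task = {
            "task_key": key,
            "notebook_task": {"notebook_path": path},
            "existing_cluster_id": cluster_id,
        }
        if previous_key is not None:
            # Each task waits for the one before it to succeed.
            task["depends_on"] = [{"task_key": previous_key}]
        tasks.append(task)
        previous_key = key
    return {
        "name": name,
        "tasks": tasks,
        "schedule": {
            "quartz_cron_expression": cron,
            "timezone_id": timezone,
            "pause_status": "UNPAUSED",
        },
    }


# Hypothetical two-step pipeline; the Quartz expression means 02:00 every day.
job = build_job_spec(
    "nightly-pipeline",
    ["/Repos/team/ingest", "/Repos/team/transform"],
    "0 0 2 * * ?",
)
```

Keeping the definition as data makes it straightforward to create or update the job from a deployment script instead of editing it by hand in the UI.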

Case Studies: Successful Databricks Implementations

Real-world Examples of Companies Benefiting from Databricks

Lessons Learned from Successful Databricks Deployments

We delve into the realm of successful Databricks implementations through insightful case studies. Discover how leading companies have leveraged Databricks to drive innovation, streamline processes, and achieve remarkable results. From improved data analytics to enhanced machine learning capabilities, learn how organizations are harnessing the power of Databricks to stay ahead in the competitive landscape. Gain valuable insights into the lessons learned from these successful deployments and understand the key factors that contribute to their effectiveness. Join us as we explore the impact of Databricks through real-world examples and uncover best practices for maximizing the potential of this advanced analytics platform.

Case Studies: Real-World Success Stories

Exploring Databricks in Action: Company Transformations

In this comprehensive analysis of successful Databricks implementations, we spotlight real-world success stories that showcase the transformative power of this cutting-edge platform. From leading tech giants to emerging startups, these case studies provide a deep dive into the strategic deployment of Databricks and its significant impact on business outcomes. Learn how organizations across diverse industries have revolutionized their data strategies, accelerated decision-making processes, and unlocked new opportunities for growth through Databricks.

Lessons Learned: Key Insights and Best Practices

Unpacking Success: Strategies for Effective Databricks Deployments

Beyond the success stories lie invaluable lessons learned from the front lines of Databricks implementations. Discover key insights and best practices that can guide your organization towards a successful deployment of this advanced analytics tool. From overcoming implementation challenges to optimizing performance and scalability, gain a holistic understanding of the factors that drive successful Databricks projects. Learn from the experiences of industry leaders and experts to navigate the complexities of data analytics and machine learning with confidence and expertise.

The Evolution of Data Analytics with Databricks

Databricks, a unified analytics platform that combines data engineering, data science, and business analytics, has changed the landscape of data analytics. The evolution it represents is a shift towards more efficient and collaborative data processes. Organizations can integrate data from various sources, perform advanced analytics tasks, and derive actionable insights in real time. The scalability and flexibility of Databricks have changed how businesses approach data-driven decision-making, paving the way for innovation and growth.

Driving Innovation and Business Value

One of the key highlights of successful Databricks implementations is the tangible impact on innovation and business value. Through real-world case studies, we witness how companies have leveraged Databricks to drive innovation across multiple fronts. From developing cutting-edge machine learning models to optimizing data pipelines for improved efficiency, organizations are harnessing the capabilities of Databricks to innovate faster and deliver enhanced value to their customers. By embracing a data-centric approach powered by Databricks, businesses can unlock new opportunities, drive competitive advantage, and foster a culture of continuous innovation.

Empowering Data Teams for Success

Successful Databricks deployments are not just about technology; they are also about empowering data teams. By providing a unified platform for data engineers, data scientists, and business analysts, Databricks fosters cross-functional collaboration and knowledge sharing. Data teams can work across departments, use shared data sets, and collaborate on projects in real time. This collaborative approach accelerates the pace of innovation and improves the overall productivity of data teams. With the right tools and resources, data teams can produce meaningful insights, make informed decisions, and lead their organizations towards data-driven success.

The Future of Data Analytics: Trends and Predictions

As we look to the future of data analytics, it is evident that Databricks will continue to play a pivotal role in shaping the landscape of advanced analytics. Emerging trends such as augmented analytics, automated machine learning, and predictive analytics are poised to drive further innovation in the field of data analytics. With Databricks at the forefront of this evolution, organizations can expect to see more sophisticated data solutions, enhanced AI capabilities, and greater integration of analytics into business processes. By staying abreast of these trends and predictions, businesses can position themselves for success in a data-driven world powered by Databricks.

Conclusion

Successful Databricks implementations are not just about adopting a new analytics platform; they are about transforming the way organizations leverage data to drive innovation, make strategic decisions, and create business value. By exploring real-world case studies, lessons learned, and best practices, companies can unlock the full potential of Databricks and harness its power to stay ahead in a competitive market. As we look towards the future of data analytics with Databricks, it is clear that the possibilities are endless, and the impact on business outcomes is profound. Embrace the transformative power of Databricks, empower your data teams, and embark on a journey towards data-driven success in the digital age.

Conclusion

By implementing the best practices and tips outlined in this blog, you can supercharge your Databricks implementation and optimize your data analytics processes for increased efficiency and productivity. Remember to continually assess and refine your strategies to harness the full potential of Databricks and stay ahead in the rapidly evolving data landscape.